This post is the second half of Part III in our Bank of America foreclosure review whistleblower series. Part III focuses on how the confusion and high cost of the foreclosure reviews weren’t simply the result of overly ambitious targets and poor design, oversight, and implementation of the reviews. These reviews never could have been done properly due to significant gaps and inaccuracies in the borrower records at Bank of America. That meant the only possible course of action was a cover-up.

Here we’ll discuss:

“Garbage in-garbage out” problem of unintegrated, unreliable records

“Fire, aim, ready” approach to launching the tests

“Garbage in-garbage out” problem of unintegrated, unreliable records

The foreclosure review revealed one of the root problems of the foreclosure crisis: unreliable, difficult to use, and in too many cases incomplete records.

Let’s start by understanding the difficulty of the task even if everything had been in good order. We’ve taken this snapshot from the Excel training model for the E and F tests, which were on fees (the full model is on Scribd, and an embedded version appears later in this post). This shows the top part of the computer screen reviewers would use to perform their work.

Each of the blue boxes is a separate system. To complete any of the B through G tests, a reviewer typically needed to access seven to ten of these systems. There were no subsets of borrowers for whom all the information could be retrieved via one system.

Having to go across so many systems to get basic information about a borrower is a sign of poorly integrated and managed computer systems. This problem alone made it costly and difficult to do the reviews, and greatly increased the odds that important information would be overlooked or not captured properly from one of the systems and entered into CaseTracker, a separate package used to perform the reviews.

The former Countrywide systems were the backbone of the Bank of America servicing platform. There were often gaps, even gaping chasms, in the information available on borrowers who did not have Countrywide-originated loans. For instance, comparatively little information could be retrieved on some mortgages Bank of America was servicing due to having acquired servicers, such as First Franklin (via Merrill Lynch), Wilshire*, and Nationspoint. As one reviewer stated, “on these files, proper review definitely could not be done.” That meant that Promontory and Bank of America would reject those borrowers’ allegations of harm, since the reviewers would not be able to find relevant information.

Even Bank of America legacy loans had important information that could not be retrieved via the systems shown above. The data from old Bank of America borrowers had been uploaded into the Countrywide systems in early October 2008, a few months after the Countrywide acquisition closed. As one reviewer described it:

Prior to the merger we were not able to access Bank of America servicing information. We could request a payment history from the old system, MSP, but we didn’t get everything and we could not verify it.

This was the same for the companies that were acquired by Bank of America. There was a large amount of information missing. There were times with these files, legacy Bank of America, Wilshire, First Franklin, etc. where we could not get servicing information, modification, invoices from attorneys and at times we could not get the HUD1 settlement statement that was used to close the loan, or the mortgage.

A third reviewer described how these information gaps undermined his work:

This created major problems in determining timelines. If a foreclosure started in 2008 I could not determine if procedures were followed at all. If modifications started prior to 2009 I was just out of luck to know if documents were received, signed, how and what modifications were offered. I had one case in which my notes actually started at the rescission of the sale and ended with the sale. I couldn’t determine anything about what had happened. Eventually that file was removed from my queue, because I had requested all notes from customer service, all notes on mods, all documents prior to the foreclosure sales deed and so on. The request became overwhelming.

That is not to say that reviewers were always fatally stymied when they requested information from the old Bank of America system, MSP. When the information did come back, it could take anywhere from a day or two to over a month to arrive. The output was PDFs, meaning images, from a DOS-prompt system. This sort of output was hard to work with and increased the odds of reviewer error.

Even where the records were complete and understandable, it’s not clear they had integrity. This matters because the position Bank of America took in the reviews was that the major Countrywide system, AS400 (the same data was also in a more user-friendly system called LAMP), was above question. As a reviewer described it:

We were told that AS400 was the system of record and it trumped everything. I had one file with a modification the borrower agreed to and made payments on but it was not in the system so I spoke to my manager at Bank of America.

I was told “That’s the system of record. That’s what you use.”

I asked: “You are telling me if I have the promissory note and it shows a $100,000 mortgage and a 6% interest rate, and I can see it was entered into the system as $150,000 at an 8% rate, I’m supposed to use that?”

“Yes, that’s the system of record.”

Incorrect or questionable information was not a hypothetical issue. The information in AS400 was data that had been input manually. Not only did the system not contain images of the records from which the data entries had been made, but reviewers could not locate images of documents stored or created by Countrywide elsewhere.

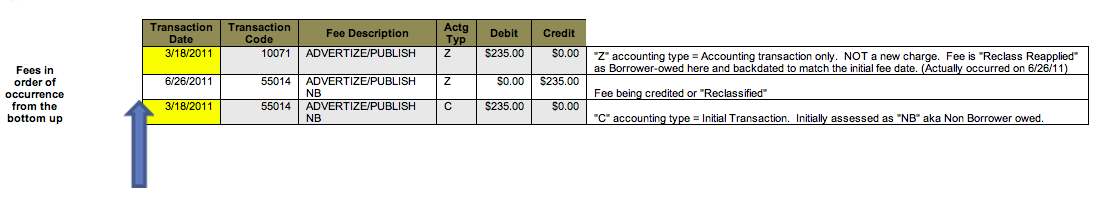

One major issue that illustrates the problems with relying on the data in the systems was reclassification of fees. Individuals working on tests on fees attest that on every single file they saw, fees were reclassified. Much of the time it was multiple reclassifications, from the borrower to the investor and back again, and on many files, it involved more than one fee. The reclassifications were so widespread that the training materials included sections on how to handle them, as the example below illustrates:

One of the reviewers commented on the training example:

Not all reclassed fees were that simple. Some were reclassed and reapplied 4 or 5 times so they were very difficult to trail. (Borrower owed -> Investor -> Non Borrower -> Back to Borrower, etc.) We had to learn how to trail the fees for Test F because any fee that was owed by the borrower more than once in that trail would look like a duplicate due to backdating when it actually wasn’t.

Important information was also difficult to interpret:

Reviewer A: Well they simply gave us the notes that were in the system, which were often incomplete, sporadic – I mean, there were notes that came from India and incredibly difficult to understand, there were system notes from Countrywide, and it was incredibly difficult to decipher a lot of times simply because of the shorthand people were using – the Bank of America employees and the Countrywide employees were making up their own shorthand and sometimes it was very difficult to interpret exactly what was going on.

Yves Smith: Mmhmm.

RA: We would sometimes get together in little groups and say, “Hey, you read this and tell me what you think it says.” So. The notes came from everywhere and the systems were kind of a jumble.

Notice also that Bank of America relied on the Lender Processing Services Desktop system for its attorney information. A consultant to the foreclosure reviews said via e-mail:

At least at the B of A review, they went through the charade of assessing permissible/non-permissible fees…..at other reviewed servicers they didn’t even bother….reviewers were asked to accept LPS system generated invoices (as input by conflicted LPS network attorneys) at face value and accept them as legit with no questions asked. No coincidence that settlement negotiations ramped up about the same time (November) that reviewers with integrity were calling to review support behind inflated third party non-legal cost billings (service of process, title charges, publications costs, etc.), not to mention evaluating the attorney fees themselves against state and GSE limitations.

It was commonplace to see ”third party” (not) legal costs billed through the LPS system at multiples of market rates given the blatant conflicts of interest that existed between servicers, LPS, their network attorneys, and the entities providing the “non-legal” foreclosure related services.

Why do you think David Stern and others were eventually delisted by Freddie Mac? And it should not go unnoticed that the excessive charges were borne by far more than those foreclosed upon…… they were borne by those who had their loans modified, reinstated, or may even have paid them off…. or, by taxpayers when sale proceeds at foreclosure did not exceed GSE loan balances. All of it, swept under the rug with the settlement, courtesy of our bank captured OCC. So just why is there an OCC? Main Street needs to demand an end to an agency that has long since ignored its interests.

Finally, one reviewer had the person sitting next to her add a note to a Countrywide borrower record by mistake. Even though that was likely an isolated case, it should have been impossible for that to occur, again indicating major system deficiencies.**

When Bank of America acquired Countrywide, it was considered to have the best systems of all major servicers. And as stunning as it sounds, Bank of America apparently comported itself better than most of its peers did in these reviews. Consider what the reviewers at the other banks must have encountered.

“Fire, aim, ready” approach to launching the tests

The implementation of the foreclosure reviews was even more confused than the underlying systems. Promontory and Bank of America ramped up well before the tests were ready to go into production, which meant that the temps, once trained, sat around processing borrower records in training mode for weeks, and in some cases for months, before the tests went into production.*** From a reviewer who joined at the end of January:

During the initial training week we were informed of tests A, B, C, D, E, F, and G. In that period we actually trained on the systems using test A to get us used to maneuvering in some of the systems. We were told that B and C were in the beta testing phase and would be ready for us to work shortly after we got to the floor. For the first few weeks we trained and then were certified on Test A. Then we Level IIIs began training on Test C and Level IIs then trained on Test B. Once we were certified on C we went live, so by early April I was live on C.

This was about as efficient as it ever got, and even this example was not that efficient. With five weeks maximum to train and certify a reviewer on a set of tests, this end of January hire could have been processing files in early March but wasn’t until early April. He was in the first group to work on Test C, so other Level IIIs hired with or before him had even more unproductive time.**** In addition, some of the Level II reviewers were moved back and forth between the B and D tests (one set of assignments) and the E and F tests (another set) which meant they wound up being trained on all four, resulting in two to three weeks of superfluous training and certification.

The Level IIs tasked to the E and F tests faced even more make-work. From a different contractor hired at the end of January:

Yves Smith: When did they add the new tests? They added the E and F tests roughly when?

Reviewer B: I want to say about April.

YS: Okay.

RB: They – it was already in the system but none of us really got a chance to see it. We were told that the resource guides that we were to follow by the book, question by question, every single time we opened it, we were told that one for E and F had not been created yet. And then when it was released it was very vague and was not even something that we could use that would give us any guidance.

YS: Mmhmm.

RB: So, you know, we, I sat from probab– well, I didn’t actually get to become an alive environment until August. So I literally sat for seven months waiting, you know, for these resource guides to be completed and waiting for the tests to be approved, I guess from everyone, so that we could actually go live.

YS: What ____ [crosstalk]…What were you doing for those seven months?

RB: Looked at – well, we could still pull loans in CaseTracker, we were just in a training environment, and so whatever work you did, every night at midnight would just be wiped out and disappear when you were in a training environment. So we would have to pull loans and kind of go through the test questions and get familiar with, you know, what type of questions were being asked. And that’s really about it. They never came around to say, you know, to provide more training –

YS: So you did no useful work for seven months?

RB: Yes. It was miserable.

One of the reasons the E and F tests were so slow to launch was that they were revised numerous times. These questions from the E test are part of the tenth iteration; see the last two worksheet tabs of the Excel model workbook below:

Bank of America Foreclosure Reviews – Model for E and F Tests

The G test, which covered modifications (one of the most likely sources of valid homeowner complaints, given the widespread reports of HAMP modification abuses), also did not go into production until August. From a Level III reviewer (recall that Level IIIs worked only on Tests C and G):

C [test for certain dual tracking and certain default-to-sale timeline violations of the mortgage] was in production first. As for test G, it went live in August. Up to that point, once C was basically over, everyone was either doing busy work and completely worthless spread sheets supposedly for G test. Everyone with any underwriting experience knew these spread sheets had no relevance to the task at hand. But it kept everyone busy.

Another reason the tests were delayed considerably was that the reviewers, who were apparently more diligent than the bank anticipated, asked for guidance on the often inexact questions and deficient resource guides. These queries were escalated first to Bank of America (problematic, particularly since the unit leaders were from Countrywide) and sometimes to Promontory. Since several reviewers often raised the same issue separately, they could and often did get conflicting answers to basic questions, such as “Does a modification cure default?” In other cases, questions led to further changes in the tests or test instructions.

And these problems were common, as one reviewer recounted:

The tests were delayed for a couple of reasons, one was some questions were ambiguous. For example: Was the borrower in a permanent modification at the time of foreclosure?

While that question does not sound ambiguous, the instructions for answering the question made it that way. Example: the original instructions told us that a payment on the modification had to have been made to be a valid modification. However, different modification program documents said different things about what made a modification valid. Some said that a payment must be sent with the signed and notarized modification agreement. Others simply said for the modification to be in effect signed and notarized documents had to be returned. The instructions changed constantly. At one point it was that a payment needed to have been received on time, then it became a payment had to be received and accepted, then it became fully executed (borrower and bank) and a payment, then it was an offer had to have been made (that may not be chronological) so the instructions made the question ambiguous. Other questions that became ‘ambiguous’ were question regarding publication dates. That is, newspaper publications of sale information and then the postponement of a sale. Some states required repeated publication of sale information after a postponement, others required on the notice just before sale, some required only a filing of notice of sale with the court. So in determining a foreclosure timeline the question could lead to all kinds of rabbit trails (as well it should) as to whether the time line was proper. Again, instructions changed repeatedly and more often than not the PC [proficiency coach] simply decided whether or not they wanted you on a particular rabbit trail and what was a proper timeline or not.

Another issue for the questions was that made a question ‘ambiguous’ were state and program specifics. A question in G and I am paraphrasing asked if the modification offered was one that the borrower was actually eligible for based on the specifics of his/her loan type. That question led to state specific information [e.g.: Hardest Hit Programs] since Michigan had certain criteria but so did all the other states so that question became very time consuming since we needed to know what Fannie Mae/Freddie/FHA/VA/conventional/state issues had to be covered but we did not necessarily have all the resources [resource guides] to cover that.

Moreover, it appears that completed reviews were not reopened in light of problems exposed later. A whistleblower explains:

The question of rescore/correction was a big issue. This was especially true of questions on Test C regarding trial mods/permanent mods/forbearance. The instructions changed often and many times we complained that our answers on previous cases were now wrong. I cannot remember ever getting a test back because the details on how to answer the question had now changed and were clarified, rendering our previous determinations on that question incorrect. We were simply told not to worry about it.

Consider what these sorry accounts show:

For many of the foreclosures included in the review, records were woefully incomplete, had critical information that was scattered and hard to interpret and integrate, and some of the information, such as attorney charges from LPS, was suspect.

Bank of America and Promontory routinely had trouble providing directions and coming up with consistent answers to questions about the loans, sometimes extremely basic ones like “does a modification cure default?”

The implications of these failings go well beyond the aborted foreclosure reviews. They demonstrate how the most important asset most families ever own, a home that they acquire using a mortgage, winds up in the hands of servicers whose records are all too often inaccurate and incomplete, and where the servicers, even years after the fact, are unsure of how they should have operated to satisfy regulatory and contractual requirements. That’s before we get to how the servicers game systems. We discuss that issue in the context of the foreclosure reviews in our next offering, Part IV, on how Bank of America sought to minimize evidence of damage to borrowers.

________________

*Wilshire was acquired by IBM on March 1, 2010. However, Wilshire was not a party to the OCC consent orders but Bank of America was. So if a borrower was foreclosed on in 2009, which was while Wilshire was owned by Bank of America, and asked Bank of America for a review, Bank of America would have had to obtain the records from IBM. The reviewers in contact with the members of the “non-I-series” team tasked to these problem children were not aware of measures to obtain information from third parties.

** The reviewers in Tampa Bay were handling only complaints involving completed foreclosures, so even if this lapse was material, it would undermine the integrity of the review but not result in other harm to the borrower. We did not hear of any gaffes that affected payment records. If this sort of mistake was possible and took place, meaning reviewers were accidentally adding credits or charges to a post-sale account, it would be a trailing charge or credit to a GSE or private label trust.

*** The reviewers speculated that some of their work in training mode may have been used to refine the tests. Even so, any debugging could easily have been accomplished with the 120 to 150 Bank of America employees from the shuttered correspondent lending department in Tampa Bay who were also assigned to this effort.

**** We refer to all reviewers in this series as male irrespective of gender. In some interviews, another interviewer participated with YS; for simplicity, and at the request of the other interviewer, all interviewers are referred to as YS.

Ah yes….journalism….I remember this from a distant past. How refreshing!

What could we call it? The manufacture of incompetence?

Ever since Christ muttered the words “Forgive them for they know not what they do,” intentional and deliberate ignorance and incompetence have become the stock-in-trade of criminals.

In one sense it is incompetence, but as I have said before, in the one place where it counted — getting the criminals involved off the hook — this operation was a well oiled machine.

The manufacture of incompetence – as a description of the result I love it; as a directive, it is useless.

my initial comment was fragged hehehe my reference to A.Nin aside, You are Excellence in Motion Yves

AH-HA! my comment is blocked due to cross referencing occ & promontory managerial & legal incest

this link/info seems to be Blocked:

stopforclosuredotcom 1.14.2013

the occ and promontory are now trading employees. julie williams (19yrs at occ) to promontory and amy friend (2yrs at promontory) to occ

No, it wasn’t. I just checked the comment queues. There is an intermittent bug in WP that drops comments after they have been submitted.

Some of the anecdotes given by these reviewers sound like something out of a modern Catch-22 novel (maybe someone could write a similar book, except instead of satirizing war, they do finance). For example:

– “You are telling me if I have the promissory note and it shows a $100,000 mortgage and a 6% interest rate, and I can see it was entered into the system as $150,000 at an 8% rate, I’m supposed to use that?”

– “Yes, that’s the system of record.”

We’re getting into Kafka territory here.

That particular anecdote also shows a deliberate policy of illegality. The signed documents filed at county courthouses are, under the law, the records *of record*.

BoA’s garbage computer system is not anything of record. Telling people to act as if it is — well, that’s a direct order to violate the law.

Incredible investigative reporting. Damn, you are thorough.

I wanna have a good laugh when some PR person from BoA tries to put the spin on this.

I presume there is no recourse here? Perhaps there’s light at the end of the tunnel, but what will it take to get there?

“Oh what a tangled web we weave, when first we practice to deceive.”

BoA is a tangled Gordian knot which no amount of regulation, review, or reform is ever going to unravel. The knot must be cut through. Send in 1000 or so FBI agents, arrest, interview and indict all the way to the top, and put BoA itself into administration.

Start today.

500 FBI agents were investigating rampant financial fraud in 2004 and warned that the whole financial system was in jeopardy. Then George Bush took them off the case and moved them to work on issues of homeland security.

It doesn’t matter who you send or how many…they all fall under the King Midas Spell…the head of the FBI in Charlotte just “retired” from the FBI and went to work for Bofa.

This should not be allowed. Law enforcement officials should be prohibited from working in financial institutions. Existing former law enforcement in the banks should be unilaterally removed.

This is BoA, the most serious of the pack.

This whole story makes me think of Sherlock Holmes, who was so worried about a dog that didn’t bark. Where are our usual trolls? no pushback? no comments elsewhere/MSM?

Another proof how serious the crime is. Yves, watch your steps.

America is caught in a confidence or credibility trap, in which the changes, investigations, and reforms necessary to restore trust to an economy or market are rendered unlikely because doing so would expose a pervasive corruption that the principals fear would destroy any remaining trust. It could also endanger the careers of politicians and business people who may have permitted and even appeared to facilitate the control fraud that caused the financial crisis in the first place. Personal risk trumps public stewardship.

“Government should be run like a business.” What we’re learning about the IT systems in this series of posts should put a stake in the heart of that slogan forever.

Bingo. imagine languishing around for 7 months waiting to jump from one field to the next…in our corporate special circle of hell environment!?!

So…….. any invites to have a chat with the top dogs at the corrupt enterprises yet? Or how about Mr. Joseph Smith?

Yves and Mr. Smith go to Washington? Now that’s a movie that will never be made…..

Aaaaand it’s gone!

Having intentionally non-productive labor was not a mistake by Promontory – It has been my experience (I view it as a fraud and theft of services) that Promontory bills clients at a specified hourly rate for their contract worker level….let’s say $200/hr, but when you look at what they pay the company providing the worker, it’s…say $65/hr and what the worker gets is $45/hr. In other words, Promontory makes big bucks on bodies present….they are incentivized to pile dead weight into the billing. You will find this (oops – Promontory does not have to disclose for labor or collateral s) goes on with supplies, programs used, rentals, per diems.

The net effect is that a contract may read like a reasonably simple overhead and profit type but, when you look at how much is made – blows the mind away. Change orders and confusion become highly profitable. As you can see, the banks can claim – oh this costs too much and, at the same time – have a great deal of control over the data produced. The type of contract Promontory has leads to vast areas of fraud, double dealing and backroom control of results obtained. Would not at all be surprised to find skimming going on and bribes abounding. Saw this type of fraud and contracts in aftermath of Katrina. The key to uncovering this is to get the back-up to all monies exchanged in the contract against the invoices issued – that back-up is protected in Promontory contract but may be pierced.

Just my two cents on the matter

Please look at our IIIA post (this stuff is dense, so I can understand why you might have missed it).

The contractors at Tampa Bay were hired by Bank of America, not Promontory. Materials sent by the agencies to the temps refer to Bank of America as their client.

Did not want to add another layer of mess but, where these guys got paid from directly and whether they were employees is a question for the IRS to determine. The BofA being highly lawyered up would have driven the line carefully.

The huge exercise of control over data and personnel by BofA may be their undoing.

The main point being that none of the missing information and mishandling was a mistake – The players are making a defense of higher crimes and misdemeanors while the prosecution is trying to prove – hand slaps are the only required steps.

It is a mess – but, in order for BofA to comply – they had to hire ‘independent’ and qualified individuals under OCC guidelines of qualifications – Just my thinking. To determine (and it was a ruse used in Katrina contracting) whether BofA hired these guys, you would have to look at certified payroll or whether these guys had an independent contract with BofA where BofA was not required to produce a 1099 to employee (that would come from contract labor company) or W2 (employee). I did not see BofA limited to Promontory under OCC agreement.

Independent contractors were to be approved by the OCC. There was a process because they needed to check for conflicts of interest. This even extended to subcontractors (Allonhill, which was a subcontractor IIRC at Wells).

The only place where low level workers were contemplated to be hired in the BofA reviews was in the Promontory engagement letter, as staffers to PROMONTORY.

The consent order specified that all the key issues in the foreclosure review were to be performed by independent contractors. The temps were working side by side with BofA employees (in similar teams) who underwent the same training as BofA staffers doing the same tasks. There is absolutely no way they can be presented as independent contractors as envisaged in the OCC order. More important, neither BofA nor Promontory has ever tried to pretend otherwise.

Re the IRS, these were temps, they were paid by an agency and all HR stuff went through the agency. There was even a full time temp coordinator (liaison to the agencies) on site in Tampa Bay.

Bring it in front of a judge in every county in every state. Dirt is a state jurisdiction. Bring every conceivable case now. There is no reason to coddle banksters. Kill them in the cradle.

Thanks for the postings.

I will do what I can to get them read widely and hope it is the log that breaks the dam of financial insolence.

How about one link to all the parts????? please and thank you.

Great job, Yves. Can’t wait for the next installment. What a clusterf**k!

I have major problems with the shutdown of this review process. First off, I was a shoe-in for the rescission with a $125K penalty, as I perfectly fit all of the criteria of the OCC’s guidelines and then some. I’ve been waiting for nearly two years to finally get some satisfaction from this review. If you knew a tenth of what BofA put me through for five years, you’d understand. Their newly revised figure of $875 in restitution won’t even cover the parking fees I incurred. Unbelievable!

Now, not only is this entire program scrapped, but I had absolutely NO IDEA that the OCC was going to share all of my detailed information with the same bank that I’m in litigation against. That blows my mind…..that they’d share my entire case file with what I consider to be the most sinister institution in the United States. I would never have imagined (nor agreed) that what I thought was going to be reviewed by a disinterested third party would be handed over to these assholes who will do, say, or fabricate anything in court to further their agenda. Is the sharing of my files with my adversary actionable?

Nobody was a “shoe-in”

What part of Potemkin — as in fake — program do you not understand?

Yves, this is all exemplary work. Being a foolish peon I can’t track everything you have compiled, but this really stood out for me:

“…As one reviewer stated, “on these files, proper review definitely could not be done.” That meant that Promontory and Bank of America would reject their allegations of harm, since they would not be able to find relevant information…”

‘These files’ were the ones from mortgage companies which BoA had acquired – certainly it was my experience from the 90’s on when I acquired my mortgage that it went through several banks before ending up at the big one. That means, had I been in difficulty there would have been no way to track back, so any harm I suffered had no way of being compensated – which would apply to huge numbers of people experiencing this shuffle.

Right there, it seems to me, the problems begin. When people lose their SS card or license or birth certificate, they have to PAY to get a new one. At the very least, all mortgages in this difficulty should be compensated for the loss of their documentation by the bank which took on the responsibility. Stands to reason!

Agreed, good point (and one I was trying to figure out how to make myself).

“…As one reviewer stated, “on these files, proper review definitely could not be done.” That meant that Promontory and Bank of America would reject their allegations of harm, since they would not be able to find relevant information…”

This is ludicrous. Not being able to find relevant information IS harm, especially if you are legally obliged to provide it when requested. If it was this bad for reviewers, imagine what it must have been like for borrowers trying to figure out what was going on.

Great work. Thank you for more heavy lifting.

We needn’t worry that the statutes of limitations are expiring on securitization and origination crimes.

Bank foreclosure crime is happening right now — the only thing missing as always is the will to prosecute.

Great series Yves. Keep at it.

The OCC and Promontory apparently gave their maximum effort to the B of A review. Completion of reviews at other servicers would have been far less complex system-wise, and could have been managed to produce the meaningful and substantial borrower harm conclusions that were evident, had that truly been Promontory’s objective. Tellingly, however, reviews at many non-B of A review sites didn’t command even one appearance by a Promontory or OCC official over the course of the 12-month engagement. The primary purpose of the other servicer review sites appears to have been to produce maximum billable hours for Promontory, the future employer of choice for outgoing OCC senior execs.

Yves, I think it’s important to get to the bottom of what exactly is meant by the phrase “System of Record”.

I believe that it is being used improperly, and probably intentionally, to refer to the legacy system that was home to Countrywide’s business applications.

“

Even where the records were complete and understandable, it’s not clear they had integrity. And it is important to understand that because the position Bank of America took in the reviews was that the major Countrywide system, AS400

We were told that AS400 was the system of record and it trumped everything. I had one file with a modification the borrower agreed to and made payments on but it was not in the system so I spoke to my manager at Bank of America.

I was told “That’s the system of record. That’s what you use.”

I asked: “You are telling me if I have the promissory note and it shows a $100,000 mortgage and a 6% interest rate, and I can see it was entered into the system as $150,000 at an 8% rate, I’m supposed to use that?”

“Yes, that’s the system of record.”

”

I believe that what they are in fact admitting is that the legacy Countrywide system (AS400) was the last system known to contain reliable information, which is not actually a good definition of “System of Record”.

The “System of Record” should be the system to which you would turn to find the latest actual data representing your business’s activity.

What is being described here is the result of monumental, and unimaginable carelessness.

IOW, as far as the loans in question go, BOA had no real “System of Record” (or had no intention of giving access to it). What they had was a vast tangle of systems linked to their fraudulent servicing operations, and a legacy system inherited from Countrywide that had an accurate but outdated record of the account’s details, dating to the date of Countrywide’s acquisition.

The legacy Countrywide AS400 system was probably home to the last ‘legal’ transactions related to the borrower’s loan; the rest of the systems would be home to the fraudulent transactions that had occurred since BOA acquired the loans.

Sorry, I’ve really screwed up my post:

The “System of Record” should be the system to which you would turn to find the latest actual data representing your business’s activity, what people owe you, and what you owe others.

A static, outdated system that does not hold data reflecting current reality cannot by definition be a “System of Record”.

What the legacy Countrywide AS400 probably represents is a snapshot of where it was before it all started to go so terribly wrong.

Thanks for these posts. These reviews cover only one part of the financial sector-bank-mortgage-securitization scandal. What we see is a pattern of a corrupt government going through the motions, having criminal organization A investigate criminal organization B (and where criminal organization A often hires criminal organization B to do the investigation). This is actually worse than what many of us grew up hearing referred to as organized crime. For one thing, if the Mafia had been running these scams, they would have kept better books. For another, the Mafia could only buy some corrupt cops and local judges, whereas the banksters buy the Justice Department, Presidents, the Congress, and the whole alphabet soup of regulatory agencies.

Organized crime...

http://www.scribd.com/doc/121700023/Monkeys-Hear

I represent homeowners (or former homeowners) who applied for modifications before losing their homes. The bank’s response, if the applicant is “lucky”: 1. An offer of a forbearance agreement that, apart from the payment terms, is ambiguous and contradictory, e.g., “as long as you comply with the payment schedule, we will notify our counsel not to proceed to sell your home” vs. “we do not give up our rights as stated in the mortgage agreement that allows us to proceed to sell your home no matter what the forbearance agreement says.” Court rulings back the bank’s right to sell. 2. Making all your forbearance payments on time only qualifies you to be eligible for a modification; it does not promise you one if we choose not to give it to you. 3. “Congratulations, you have been awarded a modification; you have previously agreed that if one is awarded to you, you will pay 15K within 10 days of acceptance” (nothing in the forbearance agreement says anything about this payment; I think the bank put this in by mistake, since it applied to a different forbearance agreement offered to others, which said that once you make all forbearance payments, you will be offered a modification and will be current if, when it is awarded, you pay an additional 15K). “Your new modified mortgage provides that you pay $3,500 instead of the $3,000 under your current agreement” (well, the monthly payment has been modified). All upheld by the courts. After all, we didn’t promise you a modification [rose garden], and if you don’t want the one we offered, that’s OK.

I could go on and on. In the process of applying for a modification, the bank says at one point (orally, nothing in writing, as that would be admissible in court; an oral representation is not) that you make $1,000 too much; six months later, while it is still considering your application, the rep says you make $4,000 too much. The eventual modification denial cites no figures, just that your financials don’t fall within the investor guidelines. Very helpful, huh? Remember, no law says we have to give you a modification. Oh, and by the way, about that language in our letter accompanying the forbearance agreement, which told you to stay in touch with us while you’re making your forbearance payments so that once you complete them we will work out an installment payment schedule to make you current (but you said I would be current then; oh right, that was oral and not admissible): well, we know you called us regularly and did your part, but we were under no obligation to tell you how much you owed to become current or to give you an installment schedule to get you there. See, that was only an agreement to make an agreement and is not an enforceable contract. And we regret to inform you that your house was sold last Thursday to the financial institution that held your second, in order to protect its loan; you know, the one that told you last Wednesday it was granting you a modification cutting your monthly payment in half, after you had paid it thousands to make yourself current on its loan, but didn’t tell you it would purchase your house the next day. You knew nothing about that because it had no obligation to tell you, and you had no notice of the sale because you thought you were current on our loan after making all your forbearance payments to us. If we can help you in the future, please call us. We love doing business with you.

Thanks Yves for all your time and trouble. The housing crisis just seems to keep giving and giving, no matter how much the powers that be try to wish it away.

“It’s Lambkin was a mason good

As ever built wi’ stane;

He built Lord Wearie’s castle

But payment gat he nane . . . ”

Hopefully you’ll have better luck. :)

Thanks, Yves. This is a nice antidote to BofA’s hometown paper, The Charlotte Observer, which in the last few days has published a fawning interview with Moynihan and his triumphant appearance in Davos, a breathless account of the two new BofA board members, and, today, a story on how foreclosures are DOWN and prices UP in N. Carolina!!! YAY! Never mind that I was served by the sheriff last week (to testify re: a hit and run I witnessed; no irony there), who told me he had 40 FC notices to deliver just that day. Nothing to see here, folks…

I suppose that in a country that still has sheriffs, and a common people boldly and baldly oppressed by the already rich and legally untouchable, Yves is rapidly taking on the mantle of Robin Hood.

Keep up the skillfully placed, always accurate arrows, Robin. Hit ’em where it hurts.

Did you tell the sheriff to start checking those foreclosures for whether they were actually legal? … Several county sheriffs have started doing that after seeing too much appalling fraud.

…well, you probably didn’t see the sheriff personally, though.

I actually chewed his ear off about it and he said that they KNEW most of them were fraud but that they had to deliver them anyway. Said most folks had already given up and vacated. Got same story from Register of Deeds – they have to file anything presented, no questions asked.

The truly shocking thing here – if shock still has meaning – is the fact that BofA is just one entity in an industry replete with more egregious examples of incompetence and racketeering – if that’s even possible. Still worse, this level of records mismanagement is endemic to the Fortune 1000 – at least the dozen or so companies I’ve personally audited. (Anecdotally, every business in the country with an annual revenue above $500M is being run by greedy, incompetent boobs who’ve been misled into believing that an MBA actually qualifies someone to be a “leader.”)

I really can’t discern whether this is amateur or professional.

60 Minutes needs to see this work you’ve done and interview you. Great job. After recognizing my own abuse in your details, I don’t feel so crazy. One advantage of being 94 when my huge new mod is paid off (see my comment to Part 2) is that if you have dementia or die, at least you will be free of BofA.

As one who tried to launch an “honest” modification business and went on to specialize in short sales, reading this simply made a “light bulb” come on. I knew I was constantly getting baloney and “off the cuff” reactions from the people I talked to at BofA. I frankly assumed that it was because management had instructed them to be ineffective. I did not realize their “systems” were so messed up that ineffective was all that they could be. . . . .

I simply learned to treat people right and appeal to their compassion rather than show disgust for the game playing and just hope they would “stick their neck out” a bit to help my client. . . . .