Google and Ascension look to be in boatloads of hot water over their secret patient medical data sharing project that involves “tens of millions” of patients. A day after the Wall Street Journal published its exposé, which we discussed yesterday, the normally complacent Department of Health and Human Services roused itself to open an investigation. Congresscritters are upset too.

And if it so chooses, HHS can lower the hammer on Ascension and Google. The Guardian reports that Google and Ascension signed an agreement hours after the Journal broke its story. This is prima facie evidence that every bit of patient data that Ascension handed over to Google was a flagrant violation of the privacy provisions of HIPAA. And as we’ll show further down, leaked documents show the data-sharing was well along.

Under HIPAA, Ascension can share patient data with “business associates” only if:

There is a contract in place

There is a HIPAA-contemplated purpose for the data provision

The agreement requires the business associate to implement practices that ensure compliance with HIPAA.

Kinda hard to do any of that with no contract.

Finally, we’ll discuss why electronic health records and the idea of applying AI to medical data have been a pipe dream for decades, and why that situation is not likely to change any time soon. The better remedy for patients with complex medical histories would be much stronger rights to their own data, including a default requirement that medical providers give patients electronic copies of test results and medication changes promptly after each visit.

Why HHS Can Nail Ascension and Google

What went down between Google and Ascension is more brazen than I imagined, and I have an active imagination. First, a quick review and an update on the state of play, starting with the high points from yesterday’s post on the Journal’s blockbuster story:

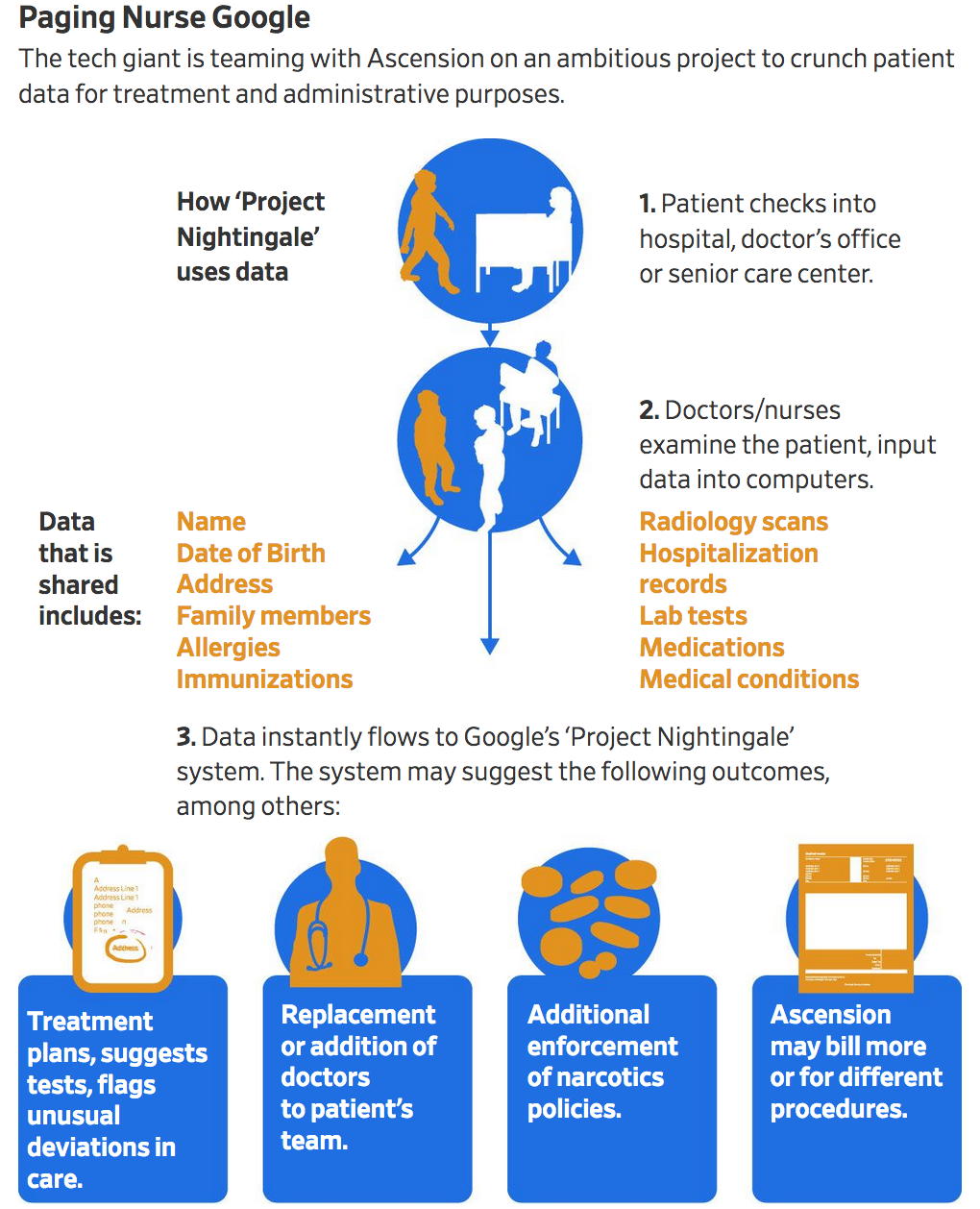

The Wall Street Journal has broken an important story on Google’s foray into the medical arena. Without notifying patients or doctors, much less obtaining their consent, the search giant has obtained the medical records of “tens of millions of people” in 21 states, all patients of Ascension, a St. Louis-based chain of 2,600 hospitals, doctors’ offices and other facilities.

Moreover, you can see that the effort is aggressive, with the aim of generating patient medical histories, linking individuals to family members, and making staffing and treatment suggestions….as well as identifying opportunities for upcoding and other ways to milk patients.

Google began Project Nightingale in secret last year with St. Louis-based Ascension, a Catholic chain of 2,600 hospitals, doctors’ offices and other facilities, with the data sharing accelerating since summer, according to internal documents.

The data involved in the initiative encompasses lab results, doctor diagnoses and hospitalization records, among other categories, and amounts to a complete health history, including patient names and dates of birth.

Neither patients nor doctors have been notified. At least 150 Google employees already have access to much of the data on tens of millions of patients, according to a person familiar with the matter and the documents.

Ascension’s and Google’s Flagrant Regulatory Violations

Today’s “enter the HHS” development, again from the Journal:

A federal regulator has opened an inquiry into Google and Ascension’s ‘Project Nightingale’ amassing the detailed health information of millions of patients.

The Office for Civil Rights in the Department of Health and Human Services “will seek to learn more information about this mass collection of individuals’ medical records to ensure that HIPAA protections were fully implemented,” office director Roger Severino said in a statement to The Wall Street Journal…

In the past few months, tens of millions of records have been shared with Google, according to the people and documents. The rest are on their way.

The data’s new destination is Google parent Alphabet Inc.’s cloud, essentially data and software storage served and able to be accessed remotely, including by some in the search giant’s employ.

A Guardian story today, which included a link to the whistleblower video that we’ve embedded below, has a critically important factoid that makes this HHS investigation a slam dunk should the agency choose to use it:

The deal between Google and Ascension to go ahead with the data transfer was formally signed on Monday, hours after the Wall Street Journal broke the story.

Huh? Substantial amounts of data have already been transferred and, worse, Google employees have access to patient data. What were the supervising adults at Google and Ascension smoking? Or did they think that memorializing their understanding would require them to put things in writing that were better not admitted to? Regardless, you can’t do projects of this scale on a wink and a nod. This alone should be reason for firing everyone at Ascension in a decision-making capacity involved in this scheme. That includes their general counsel and CEO; the whistleblower video below states that the project has sponsorship at the “Director, VP, SVP, EVP and CEO levels of Ascension and Google.”

Here is the HHS’s commentary (emphasis ours):

The Privacy Rule allows covered providers and health plans to disclose protected health information to these “business associates” if the providers or plans obtain satisfactory assurances that the business associate will use the information only for the purposes for which it was engaged by the covered entity, will safeguard the information from misuse, and will help the covered entity comply with some of the covered entity’s duties under the Privacy Rule. Covered entities may disclose protected health information to an entity in its role as a business associate only to help the covered entity carry out its health care functions – not for the business associate’s independent use or purposes, except as needed for the proper management and administration of the business associate.

General Provision. The Privacy Rule requires that a covered entity obtain satisfactory assurances from its business associate that the business associate will appropriately safeguard the protected health information it receives or creates on behalf of the covered entity. The satisfactory assurances must be in writing, whether in the form of a contract or other agreement between the covered entity and the business associate.

Business Associate Contracts. A covered entity’s contract or other written arrangement with its business associate must contain the elements specified at 45 CFR 164.504(e). For example, the contract must: Describe the permitted and required uses of protected health information by the business associate; Provide that the business associate will not use or further disclose the protected health information other than as permitted or required by the contract or as required by law; and Require the business associate to use appropriate safeguards to prevent a use or disclosure of the protected health information other than as provided for by the contract. Where a covered entity knows of a material breach or violation by the business associate of the contract or agreement, the covered entity is required to take reasonable steps to cure the breach or end the violation, and if such steps are unsuccessful, to terminate the contract or arrangement. If termination of the contract or agreement is not feasible, a covered entity is required to report the problem to the Department of Health and Human Services (HHS) Office for Civil Rights (OCR). Please view our Sample Business Associate Contract.

So what about “contract” did Ascension and Google not understand? Or were all of those “the contract must” provisions, particularly prohibiting the use of the patient information for Google’s own use, something Google was unwilling to commit to? You can see why it was in Google’s interest to continue to play Big Data Hoover and gobble up whatever information on individuals it could get. But what sexual favors were exchanged to get Ascension execs to so flagrantly violate the law and their ethical obligations to patients and doctors?

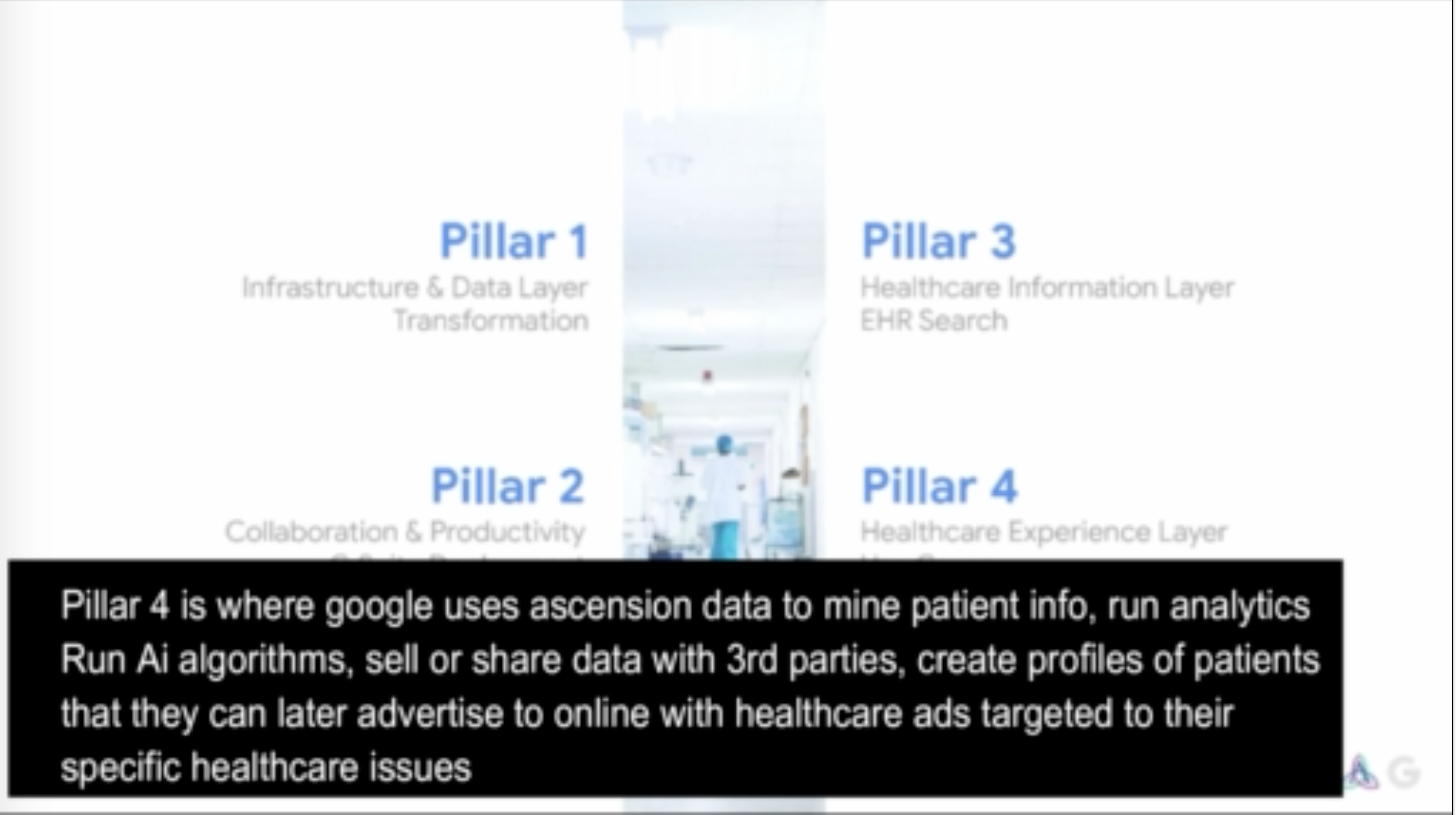

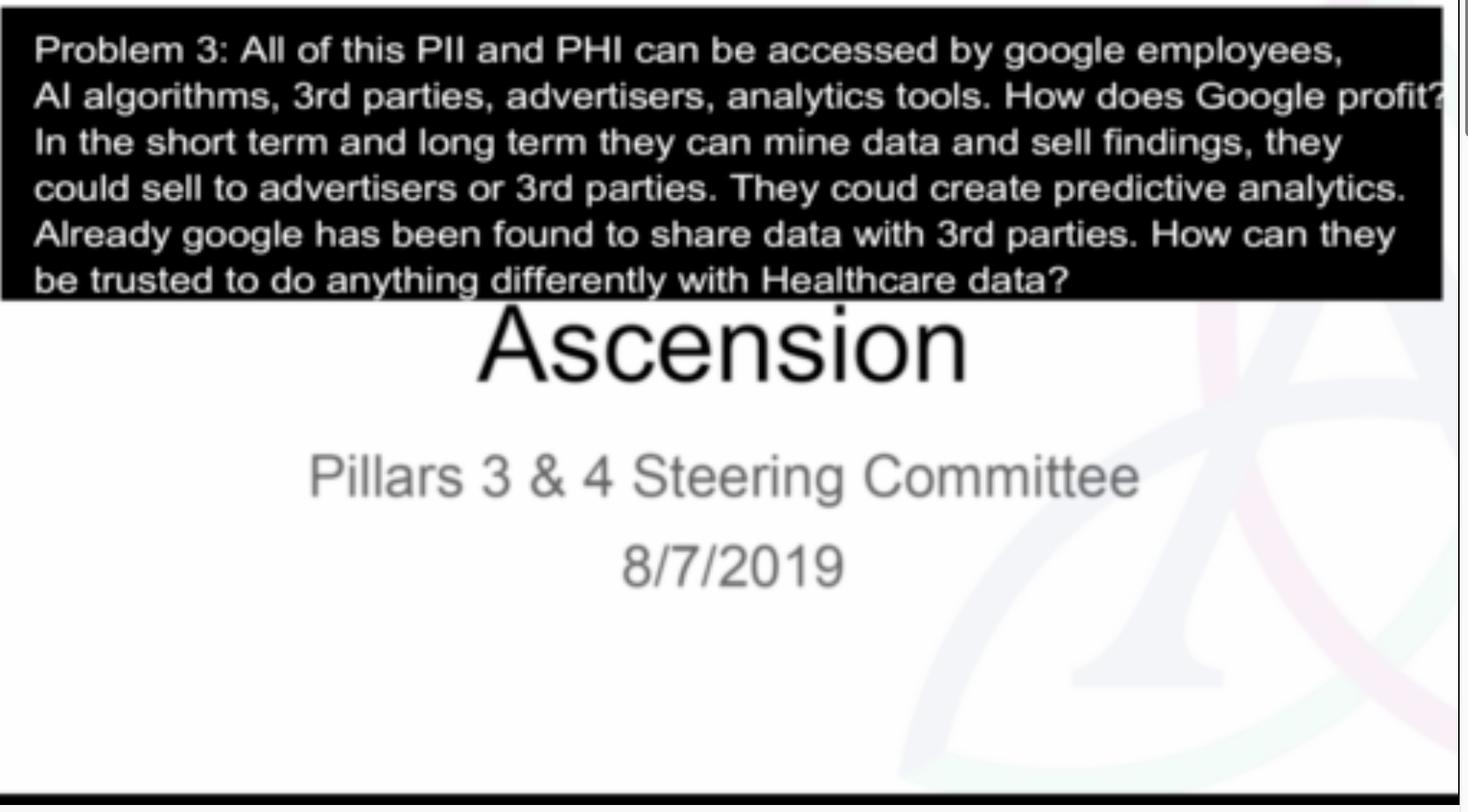

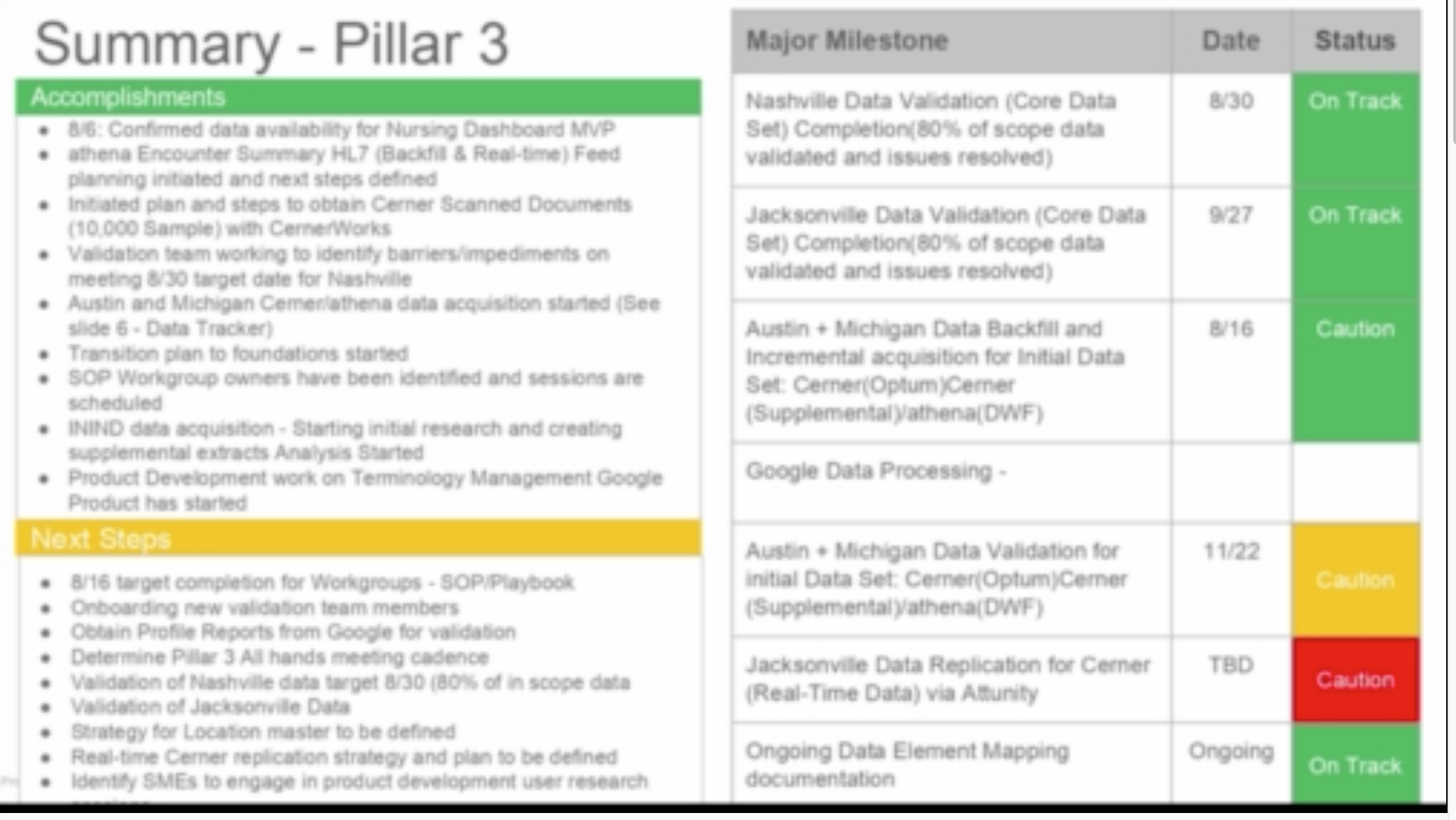

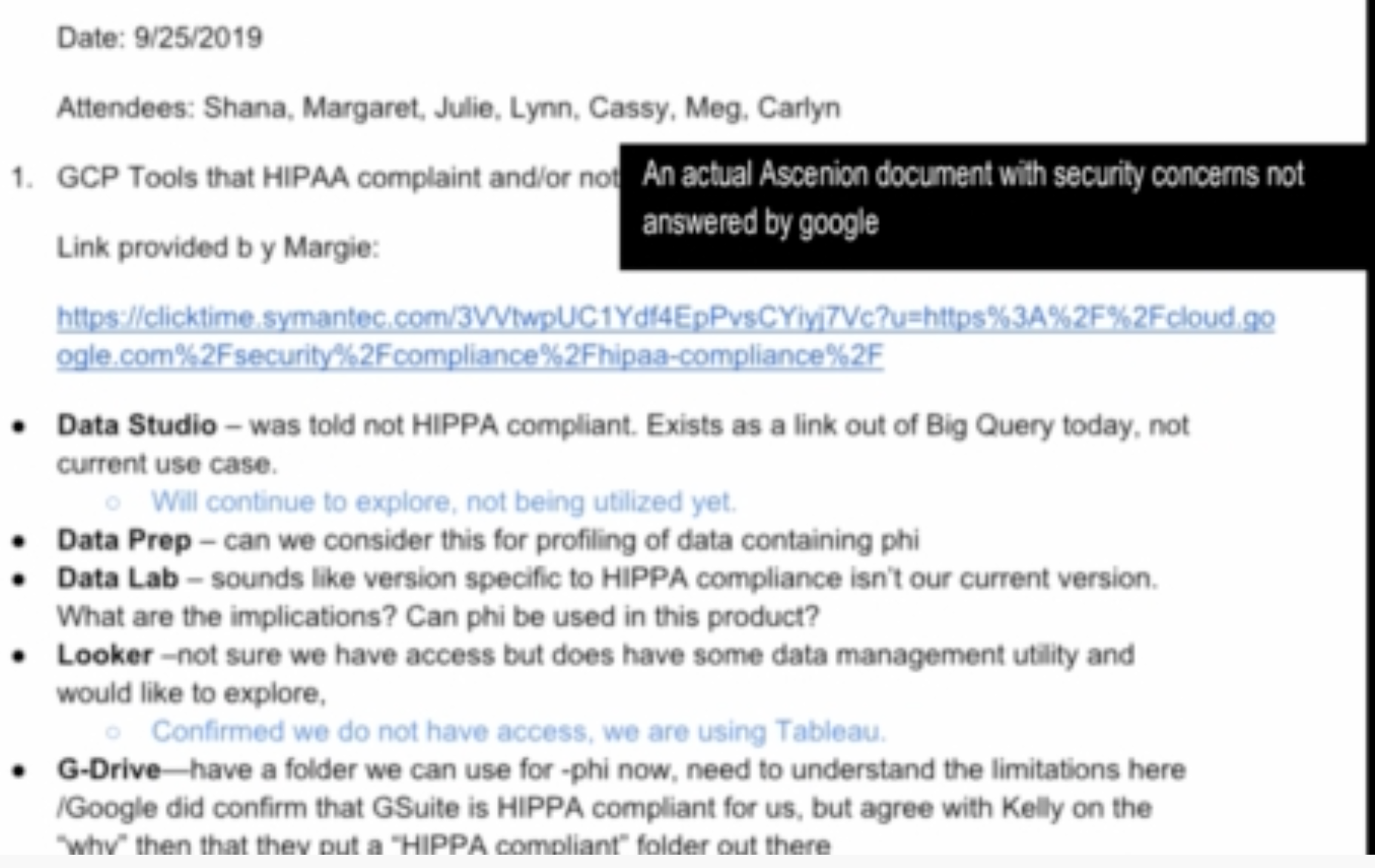

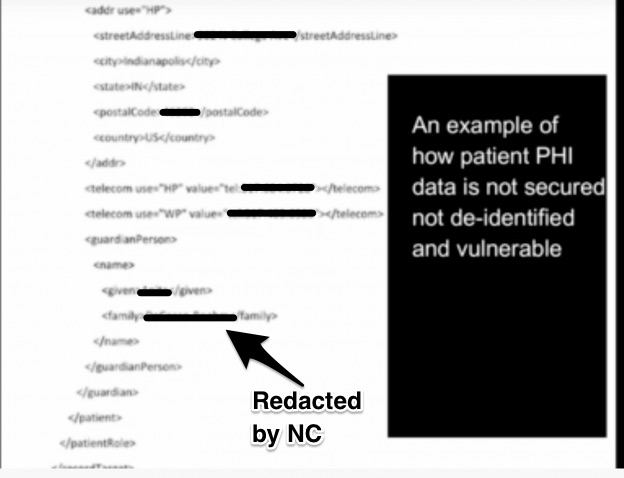

Oh, and aside from the Journal’s own account, which Google and Ascension do not appear to have disputed, the whistleblower has created his own little video with screenshots and commentary on project documents. You can infer that the whistleblower is from Ascension. I can’t get the audio in any of my browsers, but the visuals were enough:

I urge you to watch it in its entirety, but here are a few screenshots that clearly demonstrate that Google’s scheme is fundamentally at odds with even America’s not terribly robust patient privacy rules:

Let us underscore: Google monetizing patient data is an absolute no-no. The drafters of HIPAA could never have imagined the horrors that Google would love to inflict on patients, like developing profiles so they can later bombard them with ads targeted to their specific healthcare issues. But you can bet this is exactly the sort of thing they would not tolerate.

The video articulates other concerns: that naked individual patient data has never before been stored in the cloud because of the security risks, yet that is already happening here, including with patient names and links to test results.1 The Guardian made clear that in Google’s other, smaller medical data projects, the medical data was encrypted, and only the health care organization possessed the keys. Even in supposedly backward Alabama, e-mailed medical images and test data must be encrypted.

To confirm the almost certainly illegal intent:

The video later describes one Google end product that it intends to build based on Ascension data: “Google Health Search”. Again, HIPAA is clear: “business associate” use of patient data for its own fun and profit is verboten. Worse, the example from Google shows that users input patient names and first see close matches. Anyone authorized to use the system can poke around and look at data not just for the sought-after patient at issue, but clearly the close matches…which strongly suggests anyone who can use this tool can rummage around widely, if not freely, in patient data records. We saw how NSA employees spied on lovers. What is to prevent employees from making malicious personal use of access to this information?

The slideshow also shows the locations where patient data has already been tossed over to Google. Are you a victim?

This is not encouraging:

Nor is this:

Even without the benefit of the ugly details from the Guardian and the whistleblower documents, knowledgeable Wall Street Journal readers were, if anything, more agitated than when the story broke. And the comments, with almost no exceptions, voiced distrust of and contempt for Google. For instance:

PATRICK MROWCZYNSKI

Flat out against HIPAA rules.

HIPAA provides for sharing of data that benefits the patient, not the health care provider. Obviously it would be easier to store the information on the cloud (unencrypted, so everyone could get at it) but in this case, the data sharing with a partner does not improve the lot of the patients health nor well-being.

It could of course improve the provider – but so could Google bribing them.

Richard Chupp

My wife and daughter work for a major hospital and they are told constantly how limited they are by HIPAA in what they can say to patients and family members.

This legality interpretation was made by a self interested party.

And they aren’t the only ones unhappy about Google getting its hands on patient data:

Google has secretly mined the health records of tens of millions of Americans to drive up costs to patients. Blatant disregard for privacy, public well-being, & basic norms is now core to Google’s business model. This abuse is beyond shameful. https://t.co/BO41AHyfDa

— Richard Blumenthal (@SenBlumenthal) November 12, 2019

Google & Ascension are collecting millions of patients’ private health data w/out their knowledge. They’re using this data to order more tests and send more bills. Technology should lower costs and help patients, not to find new ways to charge them more. https://t.co/EddxtoFtsi

— U.S. Senator Bill Cassidy, M.D. (@SenBillCassidy) November 12, 2019

Why Skepticism Is Well Warranted

On Twitter and in some comments, once in a while, someone says it would be really nice to have patient records all in one place and Google looks like just the kind of company to do that.

This is deeply wrongheaded thinking.

First, what about taking some personal responsibility and getting copies of your records? How hard is it to get and keep copies of test results? Admittedly, some hospitals are reported to be uncooperative, but even they knuckle under when pushed. One possible upside of this Google abuse is that it could fuel a push for hospitals and other medical providers to provide records not just on request but by default, in electronic form (not electronic health records, which would require specialized software to access, but machine-readable PDFs).

Second, there is no reason to think Google will be good at this task. Google is good at advertising. It would probably be good at developing algos to help medical providers upcode, as in charge even more and thus increase medical cost inflation. It would also be good at selling patient data to third parties, as well as scamming.

There is no reason to think Google will be all that good at visualization of medical data, which seems to be a capability it is hyping in its Ascension documents, or more important, that visualization will improve patient outcomes. I hope to get an expert on medical information to weigh in, but Google’s ambitions sound awfully reminiscent of the way Excel visualizations make people stupider or the way people in Corporate America have come to regard spreadsheets as being more real than the scenarios they are attempting to capture. [A cynic might conclude that some developer got their visualization library written into the project requirements. –lambert] A lot of the time it is much better to look at data as data.

Third, and most important, electronic health records and AI have never come close to living up to their promise in decades of trying. If anything, the creation of EHRs has actually led to even more inaccurate patient records.

In other words, Google is embarking on a massive garbage-in, garbage-out project. Even if it could overcome the ginormous hurdle of combining patient data from very diverse original sources (merely identifying unique patients correctly and gathering their data is really hard; banks and retailers struggled with that for a decade and a half, and they had SSNs) and getting it into one format, the underlying data is often bad and Google is in no position to clean it up.
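To make the patient-matching point concrete, here is a toy Python sketch. The three records, the field names, and the cleanup rules are all invented for illustration; nothing here describes how Google or Ascension actually match records, which has not been disclosed, and real record-linkage systems are far more elaborate. It simply shows how one person can turn into several “patients” once data from different sources is combined.

```python
from collections import defaultdict

# Hypothetical records for ONE patient, as three different source systems
# might have entered her. All values are invented for illustration.
RECORDS = [
    {"source": "hospital_a", "name": "Mary O'Neil", "dob": "1952-03-07"},
    {"source": "clinic_b", "name": "ONEIL, MARY", "dob": "03/07/1952"},
    # Data-entry error: month and day transposed.
    {"source": "lab_c", "name": "Mary Oneil", "dob": "1952-07-03"},
]


def exact_key(rec):
    """Match only on name and date of birth exactly as typed."""
    return (rec["name"], rec["dob"])


def normalized_key(rec):
    """Match after simple cleanup: case, punctuation, word order, date format."""
    # Keep letters and spaces, turn commas into spaces, drop other punctuation.
    cleaned = "".join(
        ch if ch.isalpha() or ch.isspace() else (" " if ch == "," else "")
        for ch in rec["name"].lower()
    )
    name = " ".join(sorted(cleaned.split()))
    dob = rec["dob"]
    if "/" in dob:  # assumption: slashed dates are US-style MM/DD/YYYY
        month, day, year = dob.split("/")
        dob = f"{year}-{month}-{day}"
    return (name, dob)


def count_patients(records, key):
    """Group records by key; each group is treated as one 'patient'."""
    groups = defaultdict(list)
    for rec in records:
        groups[key(rec)].append(rec["source"])
    return len(groups)


if __name__ == "__main__":
    print(count_patients(RECORDS, exact_key))       # 3
    print(count_patients(RECORDS, normalized_key))  # 2
```

All three records belong to the same person, yet exact matching finds three patients and even the cleaned-up version still finds two, because of one transposed birth date. Loosening the rules further (say, ignoring the date of birth) would start merging different people who happen to share a name, which is the other way these projects go wrong.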

From Informatics MD, a health care IT professor and expert witness, at Health Care Renewal:

First and foremost, the focus of this “project” is the hackneyed cybernetic miracle we’ve been promised for decades, the “Artificial Intelligence” that will “revolutionize” medicine.

I view this concept as massively over-hyped and likely fraudulent, an effort to salvage the very same promises made of the entire EMR project on which has been spent hundreds of billions of dollars (more likely beyond the trillion range by now), while waiting for Godot.

Those monies could have been better used to provide world-class healthcare for an entire population, especially considering the lack of evidence of the miracles promised.

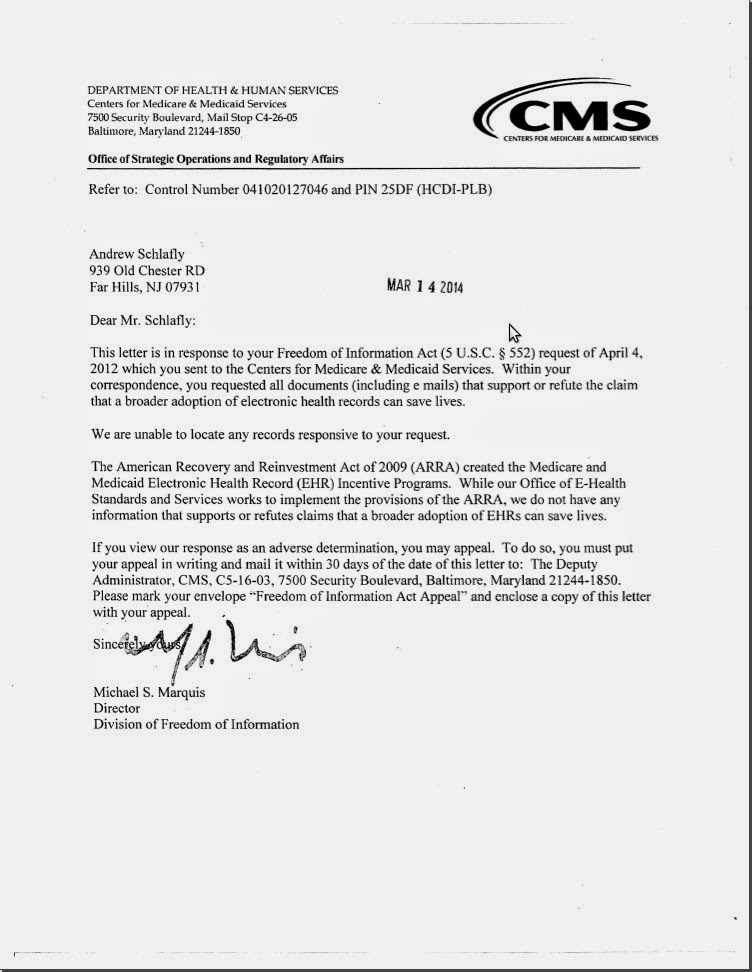

CMS [per embedded document at the end of the post]: “We do not have any information that supports or refutes claims that a broader adoption of EHRs can save lives.”

I have already written that the entire national EMR project is a mass human-subjects experiment without informed consent that can maim or kill patients in many different ways, including clinician distraction and IT error, among others. I also note that as I’ve been involved in litigation support over the past decade, I’ve been exposed to what really happens without the filter of the press and the IT industry. (The verdict in the last case in which I testified about Bad Health IT, for example, went to the deceased plaintiff’s heirs – amounting to more than $16 million; others were in a lower but still multi-million dollar range. Yet, you likely will never read about these in the HIT literature)….

This new development would represent an invitation to massive deliberate or inadvertent abuse, and is likely a massive violation of the HIPAA Privacy Act, despite claims to the contrary.

Just hours after the secret project was revealed, the two companies announced the collaboration in a press release, in which they said the joint project would see Ascension’s data moved onto Google’s Cloud platform.

The statement said the joint project aims to “optimize the health and wellness of individuals and communities and deliver a comprehensive portfolio of digital capabilities that enhance the experience of Ascension consumers, patients, and clinical providers across the continuum of care.”

More cybernetic miracles promised for the true believers, expressed in the typical IT-magic phraseology. Plenty of profits, too.

Eduardo Conrado, Executive Vice President of Strategy and Innovations at Ascension, said: “As the healthcare environment continues to rapidly evolve, we must transform to better meet the needs and expectations of those we serve as well as our own caregivers and healthcare providers.

The “transformations” needed are to scale back the IT and the bureaucracy that burdens good clinicians and consumes massive amounts of $, and the reduction of waste on worse-than-useless Bad Health IT (http://cci.drexel.edu/faculty/ssilverstein/cases/):

Bad Health IT is IT that is ill-suited to purpose, hard to use, unreliable, loses data or provides incorrect data, is difficult and/or prohibitively expensive to customize to the needs of different medical specialists and subspecialists, causes cognitive overload, slows rather than facilitates users, lacks appropriate alerts, creates the need for hypervigilance (i.e., towards avoiding IT-related mishaps) that increases stress, is lacking in security, compromises patient privacy, lacks evidentiary soundness or otherwise demonstrates suboptimal design and/or implementation.

More corporate mumbo-jumbo:

“Doing that will require the programmatic integration of new care models delivered through the digital platforms, applications, and services that are part of the everyday experience of those we serve.”

The partnership will also explore artificial intelligence and machine learning applications to help improve clinical quality, and effectiveness, patient safety and increase consumer and provider satisfaction, according to the statement.

The data collected by today’s EMRs is subject to inaccuracy for multiple reasons mentioned at this blog, including perverse incentives, clinician harassment and cognitive overload, time limitations, forced entry of some data to move further on in the record, and others. Further, the Bad Health IT systems used to collect and display it exposes patients to risk and injury. “AI” will not solve these “issues.”

Tariq Shaukat, President of Google Cloud, added: “Ascension is a leader at increasing patient access to care across all regions and backgrounds, particularly those in disadvantaged communities. We’re proud to partner with them on their digital transformation.

“Digital transformation” is, quite frankly, the same BS as “IT revolutionizing healthcare” that I’d heard since at least the mid-1990s …

More billions of dollars are to be transferred from patient care to the IT industry.

These $ could be far better spent, IMHO, on care delivery, including to the disadvantaged and minorities, and in rethinking the current health IT morass. …

I have passed the newly-released articles on this matter to attorneys with access to the national trial lawyers’ listservs, where the merits of “Project Nightingale” can be considered from the perspective of non-toothless patient’s rights advocates.

FINALLY:

I believe invasive healthcare data trafficking projects like this, with potential for massive abuses, provide reasonable justification for patients to REFUSE the use of EHR’s in their care. Paper works just fine. In fact, when the IT goes down, it’s what hospitals and doctors go right back to, and the PR always claims that “patient care was not compromised.”

As Lambert summed it up:

Holy crap, Google is going to merge private medical data records with its existing data and sell ads off it.

1) Putting technical issues like merging is hard because data is bad aside….

2) Google apparently thinks its data is good enough that they CAN, or more plausibly CLAIM THEY CAN, target INDIVIDUAL searchers with INDIVIDUAL medical records. I mean, isn’t that what the words say?

3) which is so evil I can’t even. You go in for a cancer test, so Google targets you at work with cancer ads, and your work sells your browser data to a data broker and the data broker sells it to a health insurance company which then fucks your policy up so when you get a diagnosis you end up going bankrupt and all because you did the right thing to begin with and got tested.

Leave it to Google to automate adverse selection!

Basic idea is that whatever you do at your doctor’s bleeds through to your browser on any page that Google helps build.

The only upside of this sorry situation is that Google and Ascension have gone so far over the line that there’s a good chance that their overreach will elicit a tightening of laws guaranteeing patient privacy and control over their medical records.

___

1 While it is difficult to prove a negative, the whistleblower’s claim that no large health care organization puts naked patient data in the cloud seems likely to be accurate.

This blatant violation of HIPAA laws is a continuation of a theme of large entities (banks, manufacturers, tech companies, etc.) begging for forgiveness instead of following the rule of law. Google and Ascension can likely expect protracted legal procedures, government fines, and awarded damages. But what they know will never happen is actual criminal prosecution for their violations. It’s good business to break the law and later pay a small fine. As Yves says, every senior decision maker at Ascension should be fired, but will they be dismissed? Not very likely.

The one thing I will say though is that this adds another massive log onto the building fire of political will to regulate the tech industry.

Cambridge Analytica becomes Cupertino Analytica, which rolls off the tongue better than Mountain View Analytica, and sounds more ominous than Sunnyvale Analytica ;)

A fine of a million bucks per byte of illegal data could smarten up the tech wasteland. Additionally, a couple or three decades of prison for the executives involved from both organizations would work wonders too.

> Google is good at advertising.

You forgot to add the word, fraud.

You picked up on the same thing. I would amend it to ‘Google is good at pretending to be good at advertising and then grossly overcharging for it.’

Time to break this behemoth up.

They have a de facto duopoly with Facebook estimated at 60% of internet advertising between them (all the other competitors are so small and fragmented that it would be difficult to advertise a major brand without the Big 2), so they don’t have to prove they’re better than the next guy, just that they’re better than nothing at all.

I did also say “scamming”.

Google will get away with it BECAUSE they know everyone’s secrets, including the secrets of HHS bureaucrats.

I am not amused.

So, if we had a single care-based system available to all, cradle-to-grave single-payor reimbursed regulated health care, none of this would matter.

In fact, it would be imperative that all the information be available to all caregivers.

If you stub your toe on concrete and rebar in the surf in Florida, get hit while biking in Sedona by an octogenarian, or need to get fixed after a work related accident in Powell, Wyoming, wherever you are, that care giver gets your info and treats you in a competent, compassionate, professional workmanlike way.

Why do we allow this to be so frigging hard?

Because there is so much profit from not doing it (copays, fees, dual billing, and so on).

The Ascension-Google affair must be raising eyebrows in EU corridors, and I think it is no coincidence that Merkel was calling yesterday for increased digital sovereignty in the EU. Later Knops, a state secretary in the Netherlands, tried to downplay this as ‘not a digital war.’

Class action lawsuit, anyone???

I’m in

I can just picture the “ambulance chasers” jumping all over this.

The law firm TV commercials that now start with “Have you been injured in a car accident?” will be changed to “Have you been treated at an Ascension medical facility?”

That sounds so quaint. This abuse brings to mind Hillary’s deliberate mishandling of classified information. I don’t recall her getting her clearance yanked and prosecuted for that. Which naturally made everyone with a clearance feel that the whole system had a double standard, if not being a joke. I’m wondering if a similar double standard will apply to Google.

I’m wondering if the real reason that this is finally getting traction is that so many people have their personal medical records with Ascension, including senior staff of the Department of Health and Human Services. That makes it personal.

IBM Creates Watson Health to Analyze Medical Data

Also note that Ascension is a bad actor in healthcare generally, refusing to offer basic reproductive health services or to properly treat the transgender.

I sure would like to know exactly what they are doing here.

http://fortune.com/2016/02/18/ibm-truven-health-acquisition/

http://www.cio.com/article/2970229/big-data/ibm-s-insatiable-appetite-for-healthcare-data.html

The “grand scheme of things”? What is the grand scheme?

IBM Positions itself as large broker of health data

https://www.wsj.com/articles/ibm-positions-itself-as-large-broker-of-health-data-1428961227 (behind paywall)

Sorry…forgot link.

Here it is:

https://www.propublica.org/article/health-insurers-are-vacuuming-up-details-about-you-and-it-could-raise-your-rates

Anytime you use ANY Google service, you are handing over cross correlated details of your life to them. Get wise, or get used, exposed, life hacked and stripped of your constitutional rights.

Check out this list:

https://developers.google.com/products/

“the search engine bots that Google programmed to continuously scour the web for new online content are probably also the retooled spider data miners crawling the content of Gmail accounts. Using any free web service or mobile app made by Google comes with the caveat that you are surrendering a part (or should I say total?) of your personal privacy.”

“One example of this rewarding scanning activity is that Google probably was able to learn of your personal credit card numbers through the monthly credit card electronic billing statements you get through Gmail, or through Google Wallet, and Google Play Store enrollment. Google’s offer to advertisers to track/share offline spending of credit card holders who watched their online ads is just one example of how deeply knowledgeable Google is about people habits and activities.”

https://seekingalpha.com/article/4088241-gmail-popular-google-still-fix-security-vulnerability

Use Firefox, and flush every cookie at the end of your session. Send money to N.C., you’ll feel better about this.

https://restoreprivacy.com/firefox-privacy/

I just sent out emails this morning to every one of my contacts who use Gmail and told them I will not be sending or receiving emails from them unless they open a Tutanota, Protonmail, or Fastmail account.

#BoycottGoogle

I am at a loss to understand why anyone would get their credit card statement via Gmail. However, credit card companies make a point of not providing your credit card number in your statement as a fraud prevention measure. But I wouldn’t like letting Google see who my transactions are with. Goes double for Google Pay.

The Financial Times reports this:

Google in talks to move into banking. … Google’s new banking effort, code-named Cache, is the $900bn company’s latest attempt to crack the personal finance industry

https://www.ft.com/content/7c4eb71c-0610-11ea-a984-fbbacad9e7dd

Here’s a link without a paywall.

*Sigh*. This is not “getting into banking”. This is a co-branded product. They’ve been around for decades. Citibank is the bank, Google is just providing a front end and taking a cut.

Hmmm.

Google vows not to sell its checking account users’ financial data … ‘Our approach is going to be to partner deeply with banks and the financial system,’ Caesar Sengupta, general manager and vice-president of payments at Google, told the Journal in an interview.

First to “partner deeply with banks and the financial system,” then to replace them? I think “getting into banking” is surely one of Google’s prime motivations. Admittedly, this is more of a front end plus cut thing, but it’s a way into the sector, and I’m sure Google will see it that way. That may be exactly why they aren’t going to sell our data – they don’t want to disqualify themselves from future growth in this sector, but they aren’t vowing not to use our data. And I can’t imagine them not using it.

Who’d have imagined Amazon would morph from an online bookseller into what it is today?

No, this is just a combination of Google hype and lazy reporting.

Banks are not permitted to be in commerce. Google would have to quit being Google to become a bank. This is an absolute bright line in regulations.

Moreover, being regulated as a bank sucks. No one sensible would want to get into that business, particularly on the low-margin retail end. Google would have much more fun and profit being a PE or VC fund. Only a little SEC oversight.

This story was so clearly overhyped that Clive was unwilling to waste his energy on an entire post debunking it. As he said via e-mail:

The phrase “to partner deeply” is not splitting an infinitive – it is avoiding the splitting of an infinitive.

I was just relaying a link, I didn’t note that they were getting into banking (as if a bank); neither did the Daily Mail article (as it was written when I linked to it). My concern would be Google’s undoubted access to Bank Account numbers, names and addresses, etcetera.

I think Sergey and Larry have considered themselves beyond the law ever since they were federally subsidized at Stanford (oh, and love that Garage Story). After its state of domicile, California, allowed Google to break California’s own laws with Gmail in 2004 (which certainly wouldn’t be the first time California allowed powerful industries to break its own laws, e.g. Skilled Nursing Rehabs/Nursing Homes, speaking of Health and Human Services Agency oversight), and later that year the CIA handed them Keyhole (now Google Earth) on a platter, it was all over. Google has been acting, with impunity and sans punishment, as if it’s a large and powerful arm of the Executive Office since its inception.

“The drafters of HIPAA could never have imagined the horrors that Google would love to inflict on patients, like developing profiles so they can later bombard them with ads targeted to their specific healthcare issues.”

I think the result would be even worse, because healthcare professionals are the ones most responsible for choosing which treatments a patient purchases. Imagine if one day drug companies (and distributors) could pay Google to screen everyone’s health records and search for symptoms that qualify for their drugs as treatment. While this may initially sound beneficial or benign, every drug company has a requirement for revenue growth, which might lead them to loosen their screening criteria each year just to get a few more customers. The drug companies could then target monetary incentives to every doctor treating these screened patients, if they consider these loosened criteria and prescribe their medications as treatment. So a corporate ethics failure like the opioid epidemic, which mostly corrupted health professionals in concentrated geographic locations, could become even more catastrophic and affect almost every patient in this country with a pain diagnosis.

I hope this issue is dealt with before it gets out of control.

At the same time as Google is trying to get hold of our health records, the EPA would like to ignore them.

A new draft of the Environmental Protection Agency proposal, titled Strengthening Transparency in Regulatory Science, would require that scientists disclose all of their raw data, including confidential medical records, before the agency could consider an academic study’s conclusions. E.P.A. officials called the plan a step toward transparency and said the disclosure of raw data would allow conclusions to be verified independently.

The measure would make it more difficult to enact new clean air and water rules because many studies detailing the links between pollution and disease rely on personal health information gathered under confidentiality agreements. And, unlike a version of the proposal that surfaced in early 2018, this one could apply retroactively to public health regulations already in place.

Public health experts warned that studies that have been used for decades — to show, for example, that mercury from power plants impairs brain development, or that lead in paint dust is tied to behavioral disorders in children — might be inadmissible when existing regulations come up for renewal.

E.P.A. to Limit Science Used to Write Public Health Rules

https://www.nytimes.com/2019/11/11/climate/epa-science-trump.html

HIPAA is a strange beast. There is only one (1) punishment for any violation of HIPAA; size matters not. Neither Google nor Ascension will be fined. The only punishment they will receive is to be declared in “noncompliance.” But “noncompliance” is the real reason anyone and everyone in healthcare is cautious to the point of paranoia.

No HIPAA-compliant person or organization can do business (in any way) with a “noncompliant” business or organization. Otherwise, they will be declared “noncompliant,” too. No appeal process or recertification process exists.

What this would mean for a doctor or a nurse: they would need to be fired immediately, and they would never be able to work in a hospital, clinic, or private practice again. Nor could they receive insurance payments from a HIPAA-compliant insurance agency. But this chain of “noncompliance” creates massive ripples for an organization of any significant size.

I would expect all of the health insurers are holding their breath, and all payments to Ascension hospitals, until HHS decides whether to declare Ascension in “noncompliance.” If it does, the health insurers will immediately demand all their money back (as far back as the laws will allow, and maybe further for good measure). Additionally, all the medical equipment manufacturers will back-order any orders that haven’t been shipped, just in case. Everyone who provides ancillary services to Ascension needs to be terminating their contracts, be they the records database company, the ambulance company, the cleaning company, the telephone service, et al. And technically, all their employees need to be turning in resignations and running for the exits, because their careers might be over without them knowing it. Any college with a nursing clinical or physician residency program needs to cut all ties with them as well.

In short, Ascension is dead.

And Google would not escape unscathed. No hospital, no clinic, no nurse, no therapist, no doctor, no employee at a medical facility would be allowed to use anything produced by Google or any service they might offer. Even having an Android cellphone might become a HIPAA violation. Imagine a world where someone who wants to enter the healthcare profession needs to drop Gmail, Chrome, Google Search, Google Docs, et al. A new interview question might need to be asked: “Do you use, or have you ever used, Google?”

Since no one has ever been so STUPID as to test the limits of HIPAA, there is no way to know the real extent of the punishment clause. For the last 15 years, every professional who has read that very simple clause has run screaming from the slightest appearance of a violation, BECAUSE even a tiny violation meant DEATH BY HIPAA. Even if battery and bodily harm are committed against a nurse or doctor, hospitals will not allow the harmed parties to testify. BECAUSE OF HIPAA. Many district attorneys have simply given up on prosecuting crimes that happen in a hospital or happen to a nurse or doctor.

If HHS doesn’t declare Ascension and Google to be in “noncompliance,” HIPAA is dead. At the same time, HHS cannot kill off Ascension and Google because of money in politics. Either way, this stupid agreement between Ascension and Google will change the face of healthcare.

Now, I need to go change my entire family’s lives to make sure Google is no longer part of them. Does anyone have a replacement for YouTube?

While it is great to learn that HHS has a nuclear weapon, in fact, as we wrote earlier, UCLA did pay a fine (or perhaps it was characterized as a settlement) for HIPAA violations. However, if Ascension is stupid enough not to roll over, cancel the project, and apologize profusely (and they don’t appear to be making any of the usual PR crisis responses), executive heads will eventually roll. Getting the CEO fired would send a big message.

As I stated above, HIPAA is a strange beast. Yes, the HHS lists the $865,500 as a “resolution agreement” with UCLA.

When it comes to HIPAA enforcement, everything falls upon the “compliance officer” inside the individual organization. Whatever this person says goes. As a guess, the UCLA compliance officer gave an arbitrary amount to “resolve” the issues of their employees inappropriately accessing medical records, and the HHS agreed. Since the affected individuals were two celebrities, the amount had to be high enough to appear appropriate, but low enough not to overly punish for “lack of understanding” and missed “vital training” for the UCLA employees. Then HHS smiled and made a press release.

In the first years of HIPAA, almost everyone (in this geographic area) was committing HIPAA violations. The agency I worked for at the time held training seminars for the local clinics and hospitals every time we saw a problem. Given the lack of understanding by everyone with whom we had contracts (including UCSF), I am unsurprised by the lack of “vital training” and “understanding” even as late as 2008 (the date range listed in the resolution agreement).

But, now, we have over fifteen years of HIPAA.

So, strange beast problem #2: HIPAA was never given to a department to enforce. The law is entirely self-enforced by hospitals, doctors, nurses, insurers, et al.

What Ascension is hoping: HHS accepts the lack of a HIPAA regulatory agency and allows their in-house “compliance officer” to claim that no violation took place.

But, with the UCLA resolution agreement, the HHS Office for Civil Rights (OCR) has declared itself to be the HIPAA enforcement agency. More problematic is that Ascension needed its compliance officer to sign off on the data sharing before the first byte was sent to Google. If the compliance officer’s signature is missing from the new Google-Ascension agreement, the agreement still isn’t HIPAA compliant. Unless Google has a HIPAA compliance officer, it cannot legally enter into an agreement with Ascension. Regardless, the first hurdle Ascension needs to clear is its own compliance officer; if the compliance officer fears the violation is too great, they are legally bound to nuke Ascension. But, since they work for Ascension … (what is a little extortion between employer and employee?)

Of course, if the compliance officer errs on the side of Ascension, the whole mess can end up in the courts, and the only penalty allowed is death to Ascension. So, it really is in their best interest to settle and settle high (really high). But if any of the patients (or any of the state AGs) take Ascension to court, Ascension would still be looking at death by board stupidity.

I imagine that Google is just now learning about HIPAA and the damage that could head their way. I doubt their response will change much in comparison to other legal filings. The only difference is everyone around them needs to abandon them and lock them out, and that might make the difference.

If anyone who wants to kill off the tech giants gets elected next year, Google has already grabbed its blindfold.

I’m guessing the link was to a YouTube video by the whistleblower?

When the platform on which the whistle is blown is owned by the company on which it was blown, we get this:

“This video has been removed due to a breach of the Terms of Use.”

Good to know that Google values its privacy.