Yves here. I’m really put off by the premise of this post, by virtue both of using Twitter and of having my e-mail inbox full of spam. I get put on junk publicist/PR/political lists almost daily, and even when I unsubscribe, they don’t always honor the request. I’m similarly unhappy about junk in my Twitter feeds and junk in the comments to the tweets where I read the conversation.

It’s revealing, and not in a good way, that the authors don’t even attempt to define bots and instead talk around the issue. Well, I can. A bot is a non-human tweet generator. See, that wasn’t hard.

Bots on Twitter are used overwhelmingly to manipulate viewers and/or to serve as unpaid advertising. The only “good” bots I can think of (and Lambert informed me of their existence) are art bots and animal bots, which put out new images automagically on a regular basis, and only Twitter users who subscribe to them get them. The transparency and opting-in make them acceptable. The rest need to go.

I’m appalled that these authors nevertheless attempt to defend bots by trying to identify “manipulative” bots as the evil ones. Sorry, as Edward Bernays described in his classic Propaganda, promoting your story is manipulation. The PMC likes to think of their manipulation as “informative” and therefore desirable.

By Kai-Cheng Yang, Doctoral Student in Informatics, Indiana University and Filippo Menczer, Professor of Informatics and Computer Science, Indiana University. Originally published at The Conversation

Twitter reports that fewer than 5% of accounts are fakes or spammers, commonly referred to as “bots.” Since his offer to buy Twitter was accepted, Elon Musk has repeatedly questioned these estimates, even dismissing Chief Executive Officer Parag Agrawal’s public response.

Later, Musk put the deal on hold and demanded more proof.

So why are people arguing about the percentage of bot accounts on Twitter?

As the creators of Botometer, a widely used bot detection tool, our group at the Indiana University Observatory on Social Media has been studying inauthentic accounts and manipulation on social media for over a decade. We brought the concept of the “social bot” to the foreground and first estimated their prevalence on Twitter in 2017.

Based on our knowledge and experience, we believe that estimating the percentage of bots on Twitter has become a very difficult task, and debating the accuracy of the estimate might be missing the point. Here is why.

What, Exactly, Is a Bot?

To measure the prevalence of problematic accounts on Twitter, a clear definition of the targets is necessary. Common terms such as “fake accounts,” “spam accounts” and “bots” are used interchangeably, but they have different meanings. Fake or false accounts are those that impersonate people. Accounts that mass-produce unsolicited promotional content are defined as spammers. Bots, on the other hand, are accounts controlled in part by software; they may post content or carry out simple interactions, like retweeting, automatically.

These types of accounts often overlap. For instance, you can create a bot that impersonates a human to post spam automatically. Such an account is simultaneously a bot, a spammer and a fake. But not every fake account is a bot or a spammer, and vice versa. Coming up with an estimate without a clear definition only yields misleading results.
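
To make the overlap concrete, here is a minimal sketch using made-up account IDs. Every name and number below is hypothetical and is not drawn from Twitter or Botometer data. It shows how the same population yields very different "prevalence" figures depending on which category is counted.

```python
# Hypothetical toy data: account IDs tagged by category (not real measurements).
all_accounts = set(range(1, 21))          # 20 accounts in total
bots = {1, 2, 3, 4, 5, 6}                 # controlled in part by software
spammers = {4, 5, 6, 7, 8}                # mass-produce unsolicited promotion
fakes = {6, 8, 9}                         # impersonate people

def prevalence(group, population):
    """Share of the population that falls in the given group."""
    return len(group & population) / len(population)

definitions = {
    "bots only": bots,
    "spammers only": spammers,
    "fakes only": fakes,
    "any of the three": bots | spammers | fakes,
    "all three at once": bots & spammers & fakes,
}

for name, group in definitions.items():
    print(f"{name}: {prevalence(group, all_accounts):.0%}")
```

In this toy population the answer ranges from 5% (accounts that are a bot, a spammer and a fake at once) to 45% (accounts in any of the three groups), which is the sense in which an estimate without a definition can mislead.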

Defining and distinguishing account types can also inform proper interventions. Fake and spam accounts degrade the online environment and violate platform policy. Malicious bots are used to spread misinformation, inflate popularity, exacerbate conflict through negative and inflammatory content, manipulate opinions, influence elections, conduct financial fraud and disrupt communication. However, some bots can be harmless or even useful, for example by helping disseminate news, delivering disaster alerts and conducting research.

Simply banning all bots is not in the best interest of social media users.

For simplicity, researchers use the term “inauthentic accounts” to refer to the collection of fake accounts, spammers and malicious bots. This is also the definition Twitter appears to be using. However, it is unclear what Musk has in mind.

Hard to Count

Even when a consensus is reached on a definition, there are still technical challenges to estimating prevalence.

External researchers do not have access to the same data as Twitter, such as IP addresses and phone numbers. This hinders the public’s ability to identify inauthentic accounts. But even Twitter acknowledges that the actual number of inauthentic accounts could be higher than it has estimated, because detection is challenging.

Inauthentic accounts evolve and develop new tactics to evade detection. For example, some fake accounts use AI-generated faces as their profiles. These faces can be indistinguishable from real ones, even to humans. Identifying such accounts is hard and requires new technologies.

Another difficulty is posed by coordinated accounts that appear to be normal individually but act so similarly to each other that they are almost certainly controlled by a single entity. Yet they are like needles in the haystack of hundreds of millions of daily tweets.

Finally, inauthentic accounts can evade detection by techniques like swapping handles or automatically posting and deleting large volumes of content.

The distinction between inauthentic and genuine accounts gets more and more blurry. Accounts can be hacked, bought or rented, and some users “donate” their credentials to organizations who post on their behalf. As a result, so-called “cyborg” accounts are controlled by both algorithms and humans. Similarly, spammers sometimes post legitimate content to obscure their activity.

We have observed a broad spectrum of behaviors mixing the characteristics of bots and people. Estimating the prevalence of inauthentic accounts requires applying a simplistic binary classification: authentic or inauthentic account. No matter where the line is drawn, mistakes are inevitable.
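
The trade-off can be sketched with a toy example. Assume, purely for illustration, that each account has a "bot-likeness" score between 0 and 1 and a known true label. The scores, labels and the `errors_at` helper are all invented here and do not reflect how Botometer or Twitter actually score accounts.

```python
# Hypothetical (score, is_inauthentic) pairs; higher score means "more bot-like".
# The labels are invented for illustration only.
accounts = [
    (0.05, False), (0.20, False), (0.35, False), (0.45, True),
    (0.55, False), (0.60, True), (0.80, True), (0.95, True),
]

def errors_at(threshold, scored):
    """Count false positives and false negatives for a given cut-off."""
    false_pos = sum(1 for s, bad in scored if s >= threshold and not bad)
    false_neg = sum(1 for s, bad in scored if s < threshold and bad)
    return false_pos, false_neg

for t in (0.3, 0.5, 0.7):
    fp, fn = errors_at(t, accounts)
    print(f"threshold={t}: {fp} false positives, {fn} false negatives")
```

Because one inauthentic account here scores lower than one genuine account, no cut-off gets both right: lowering the threshold flags more real users, and raising it lets more inauthentic accounts through.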

Missing the Big Picture

The focus of the recent debate on estimating the number of Twitter bots oversimplifies the issue and misses the point of quantifying the harm of online abuse and manipulation by inauthentic accounts.

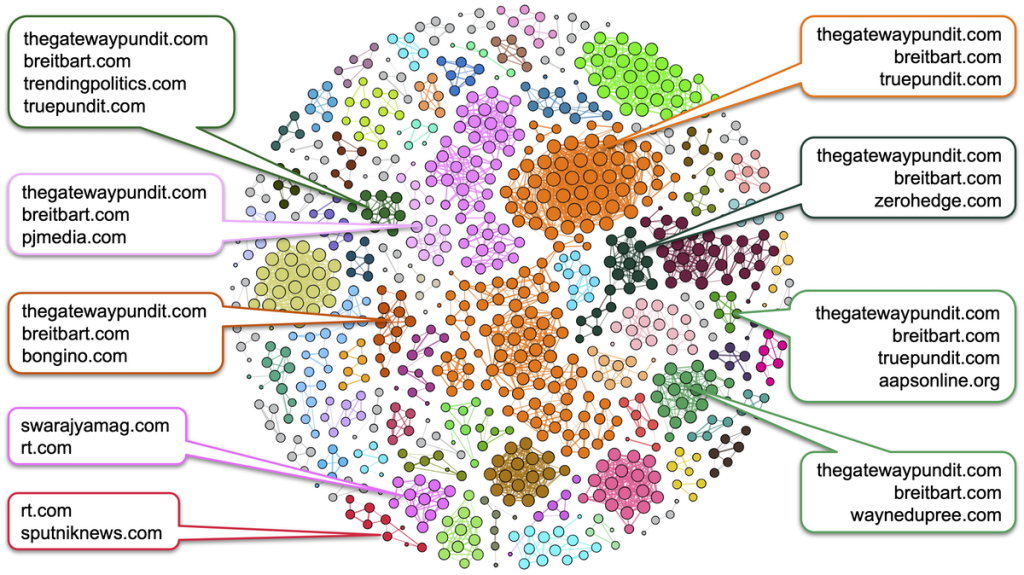

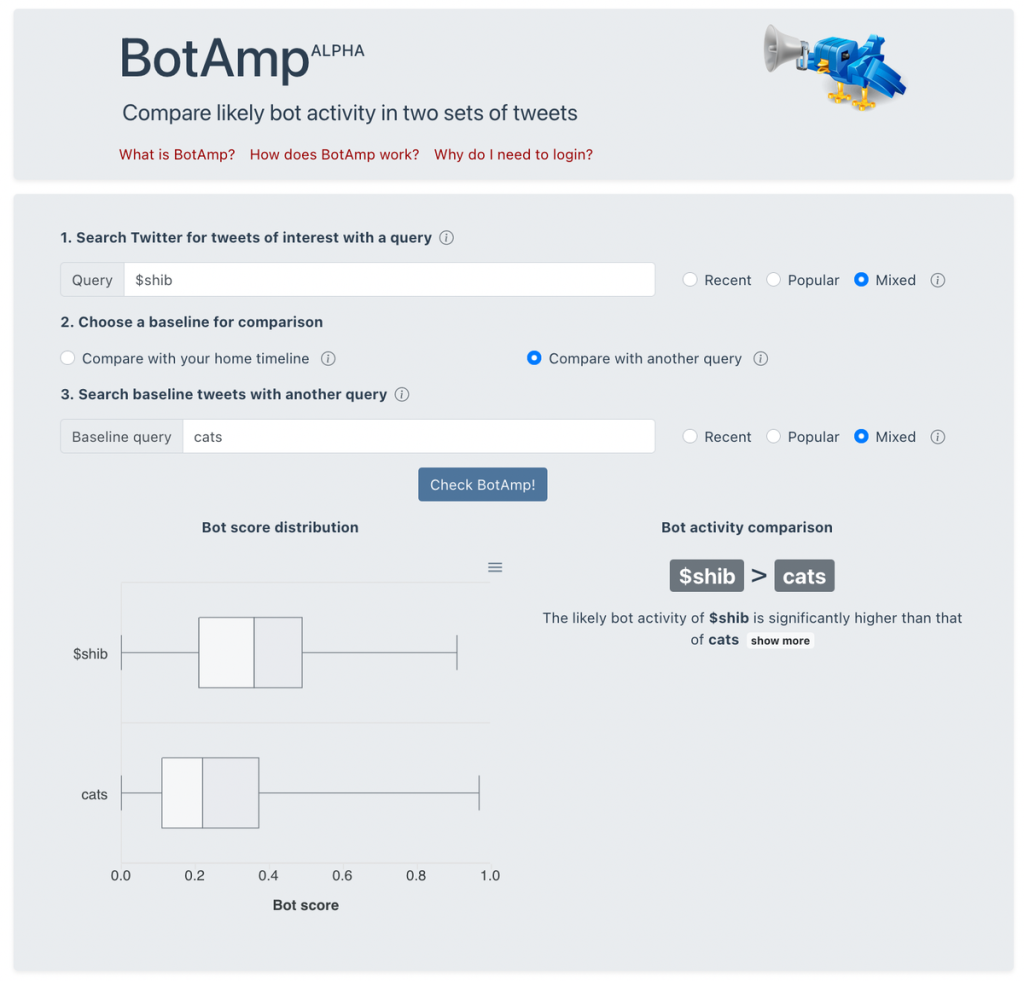

Through BotAmp, a new tool from the Botometer family that anyone with a Twitter account can use, we have found that the presence of automated activity is not evenly distributed. For instance, the discussion about cryptocurrencies tends to show more bot activity than the discussion about cats. Therefore, whether the overall prevalence is 5% or 20% makes little difference to individual users; their experiences with these accounts depend on whom they follow and the topics they care about.
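
A small worked example shows how an overall figure can hide large differences between topics. The counts below are hypothetical, not BotAmp output, and the sketch assumes for simplicity that no account appears in more than one topic.

```python
# Hypothetical counts of (accounts seen, accounts flagged as automated) per topic.
topics = {
    "cryptocurrency": (1000, 300),
    "cats": (4000, 40),
    "local news": (5000, 160),
}

total_accounts = sum(seen for seen, _ in topics.values())
total_flagged = sum(flagged for _, flagged in topics.values())
overall = total_flagged / total_accounts

print(f"overall: {overall:.1%}")
for topic, (seen, flagged) in topics.items():
    print(f"{topic}: {flagged / seen:.1%}")
```

With these made-up numbers the overall rate is 5%, yet someone following the cryptocurrency discussion would encounter automated accounts 30% of the time, and someone following cats only 1% of the time.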

Recent evidence suggests that inauthentic accounts might not be the only culprits responsible for the spread of misinformation, hate speech, polarization and radicalization. These issues typically involve many human users. For instance, our analysis shows that misinformation about COVID-19 was disseminated overtly on both Twitter and Facebook by verified, high-profile accounts.

Even if it were possible to precisely estimate the prevalence of inauthentic accounts, this would do little to solve these problems. A meaningful first step would be to acknowledge the complex nature of these issues. This will help social media platforms and policymakers develop meaningful responses.

How about defining a “bot” as a “profit-motivated tweet generator”. That has the advantage of including Kai-Cheng Yang.

For pay poster, aka influencer, aka bot.

Me like.

‘Wet’bot for Terran human “curated content” posters, and ‘Dry’bot for artificial, or, more specifically, non Terran human, “curated content” posters.

Unfortunately, that lets pass all the political spam and firestarting, which is the real problem.

I suppose I could make a case for “profiting from ‘social’ capital”, but I take your point. Else we’d have to confer bothood on most of humanity.

Thanks for this. As often, Yves’ intro provides critical insight and addition to what is left unsaid in the piece. I was also annoyed by the inability to give a clear definition of a bot since it is a standard thing hardware people like me know about, but then I noticed the authors are informatics academics. Let me add a little color to the discussion by explaining what informatics is and why they cannot describe the actual problem (much less fix it).

What normal humans think of as computers are created and operated by at least 5 distinct types of workers: hardware, software, data, user interface/presentation, and support. Since the advent of the cloud era about a decade ago, the divisions have compressed further and further so that as many types as possible can be classified as either “engineering” or … not. The reason for that is compensation brackets. “Informatics” is the attempt to classify tasks in the data and UI divisions as engineering roles to justify and make relevant a very expensive college degree for one of the only industries that has a real path to promotion via on-the-job learning.

The writers of this piece are coming from the academic (not real world/operational) perspective of data and user interface/presentation layer workers. They very literally cannot see that a bot is simply a non-human tweet generator because they don’t care about the non-human aspect of it. They care about which model in the data layer it belongs in (so classification taxonomy/tags/metadata – “how do we describe it”) and how it should be seen by the user of the software. In fact the first leads to the second, that is why this entire piece is defending their taxonomy groupings as being relevant to the problem at hand.

In the social psychology classic The Thick of It, there is a scene where a group of distressed and stupid MPs are harassing a civil service lifer about how they should present some unpalatable policy to a reporter. The civil service worker is like, I don’t care, they’re apples or oranges, if you tell me to sell the apples I’ll sell the apples, and if you say oranges then by god these are the best oranges you’ve ever tasted. That is effectively the role these academics have trained for. Non-human-generated content is a product problem and upstream of their work.

As someone who has worked on the “hardware” and “support” sides of computers, high speed (satcom) data communications, CNC machine tools and High Performance Computing Platforms for over 40 years, your explanation is the best I’ve seen yet regarding the basic work slots.

For me it is simple, if it ain’t a human being at the keyboard, it’s a bot. Making it more complicated than that seems foolish to me,… other than generating paychecks for “informatics” specialists and software writers, of course.

The writers may be surprised to learn that they fit into the linked list!

10 secret code words that IT folks use

Sadly ever since “Russiagate”, bots often also incorporate human powered propaganda efforts.

Even before Russia invaded, trying to be more nuanced than Putin==Hitler==SATAN on social media would invariably get you branded as a “Russian bot” for the effort.

For added fun you would often get labeled both communist and fascist as well, usually by people trying to claim Nazis were actually socialists.

Maybe Twitter would benefit from more bots:

1) I’ve seen insanely stupid tweets from blue check folks who are presumably not bots, and it’s hard to imagine bots coming up with dumber ideas.

2) Perhaps we could reach a steady state where bots chat with each other on Twitter and humans have face-to-face conversations in the real world.

Would making Twitter fee based ($1.00 per word) address the problem?

I think we would see the opposite effect. The “big” political social ‘bot farms’ are backed by wealthy individuals and corporations. They can afford the fees, while ‘legitimate’ individuals cannot. We would end up with a digital enclosure movement.

But, say, a system where, if you send actual verification that your account belongs to a real human, your posts are free, and accounts that are not willing to verify themselves have to pay, might be an interesting idea.

It would be an interesting experiment, but the potential for abuse will reside in the methods utilized for verification of the poster’s status. I see it as another ‘nudge’ towards ubiquitous biometric data collection. Who knows what mischief ‘they’ could get up to with all of that data?

“I’m appalled that these authors nevertheless attempt to defend bots by trying to identify “manipulative” bots as the evil ones.”

You’re right to be appalled… I was thinking at least in that article they’d give us a number/percentage of the users who are bots, before attempting to hand wave it away, instead we only get this?

Is it 20%? What’s the estimated number? I wouldn’t be surprised if it was higher (whether they take into account inactive bots or not). Sure they may coalesce around certain topics, but it’s still helpful to know how many there are & set your expectations accordingly.

They also seem to be giving twitter itself a pass here for the bot activity… as if twitter bots would exist outside the network. I had to look this up, but I remember Sally Albright STILL tweeting under her own name/handle long after HuffPo outed her for running her own bot network in support of Hillary. That was public knowledge in March 2018, and according to the GrayZone her account wasn’t suspended until September 2020.

How serious can twitter be about policing bots, when they let things like that slide for years?

There is a huge distinction between automatically posting to an account you own and comment spamming.

What Twitter should do is what old forums always did: put the date of account creation and number of posts and comments next to the commenter name. There is a plague of month-old accounts spreading rumors and bad faith arguments.

Twitter does tell you both of those stats, though you have to click to the account profile.

While looking at the number of tweets per month could be a good way to ID a bot account, the problem is that the people who use bots for spam or other purpose can just create more accounts and tweet through them less frequently.

If Twitter really wanted to do something about bots, it could try and catch them at the beginning by looking for telltale markers like many accounts being created by the same IP address in a short interval of time. They have tests like this already, but something tells me that part of the problem is that bots have been good for business and “growing the ecosystem” so they turned a blind eye to them.
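
As an illustration of the kind of marker described above, here is a minimal sketch that flags IP addresses creating several accounts within a short interval. It is not Twitter's actual test: the `burst_ips` function, the 10-minute window, the threshold of three accounts and the signup log are all assumptions made up for this example.

```python
from collections import defaultdict
from datetime import datetime, timedelta

def burst_ips(signups, window=timedelta(minutes=10), min_accounts=3):
    """Return IPs that created at least `min_accounts` accounts within `window`."""
    by_ip = defaultdict(list)
    for ip, created_at in signups:
        by_ip[ip].append(created_at)

    flagged = set()
    for ip, times in by_ip.items():
        times.sort()
        start = 0
        for end in range(len(times)):
            # Advance the left edge until the span fits inside `window`.
            while times[end] - times[start] > window:
                start += 1
            if end - start + 1 >= min_accounts:
                flagged.add(ip)
                break
    return flagged

# Hypothetical signup log: (IP address, account creation time).
t0 = datetime(2022, 5, 1, 12, 0)
signups = [
    ("203.0.113.7", t0),
    ("203.0.113.7", t0 + timedelta(minutes=2)),
    ("203.0.113.7", t0 + timedelta(minutes=5)),
    ("198.51.100.4", t0),
    ("198.51.100.4", t0 + timedelta(hours=3)),
    ("198.51.100.4", t0 + timedelta(hours=6)),
]
print(burst_ips(signups))
```

Only the first address is flagged, since its three signups fall within five minutes. A rule this simple is easy to evade by rotating addresses or spacing out signups, so it is at most one signal among many.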

Clicking to the account profile on dozens or hundreds of comments is not feasible. And as you’ve noted, putting this info next to profile names would greatly increase the effort required to spam.

Is it possible that Captain Obvious here has never run across the twitter account of pretty much any politician?!? They do all that, plus some pandering thrown in for good measure.

I also noted a “trial balloon” floated there for more biometric identification methods to be employed in the ‘infosphere.’

“…some fake accounts use AI generated faces as their profiles.” This begs the question of what to do about it. Obviously, for authoritarian minded Terran humans, increased use of biometric data is in order.

Ye Panopticon awaiteth ‘With Folded Hands.’

Jack Williamson saw our future away back in 1947.

“With Folded Hands.”: https://en.wikipedia.org/wiki/With_Folded_Hands

Yeah, and some of this is snuck in when a vendor first says that 2FA is “recommended” (e.g., Apple now nags you about 2FA every time you install a software update to iOS), and then it turns out 2FA involves biometric identification (like a fingerprint via your cellphone), and then at some point later 2FA becomes required.

What is a bot? Keep it simple, stoopid. A bot is an automated account rather than a human being. “Bot” as in robot.

The guys who wrote this paper estimate 5% of Twitter accounts are bots. They are wrong. Try 10 times that amount. Over half the accounts on the internet are bots. We broke the halfway point 5 years ago.

https://www.theatlantic.com/technology/archive/2017/01/bots-bots-bots/515043/

The truth is the internet is eat up with bots that artificially click on views and likes and tweets and so on, inflating the metrics used to count how popular any given thing is on the internet, including Twitter.

It’s not that Donald Trump was the only fake thing on Twitter. It’s that most of what is on social media is fake and the traffic to websites and so on is fake.

As soon as clicks and views and user demographics were monetized, the advertisers and data miners and other scammers found ways to make money. This is more bigger and better done by bots – duh!

hard agree. For just one possible explanation of why this would be done at the corporate level and continually overlooked in product implementation, a lot of startup funding and valuation is done off of metrics of something called “Monthly Active Users” (MAU) with the thinking that higher MAU = higher usage = more likelihood of turning a profit. At every stage everyone is incentivized to juice the numbers and not look too closely at the difference between distinct human and bot so that the payoff is bigger for everyone.

Elon is shocked, shocked to find bots on Twitter.

Twitter would appear a lot less engaged without bots, and everyone who has checked out high-profile accounts like Glenn Greenwald’s knows that.

As a devotee of logic, I thought this short paragraph from the article should be memorialized:

“Based on our knowledge and experience, we believe that estimating the percentage of bots on Twitter has become a very difficult task, and debating the accuracy of the estimate might be missing the point. Here is why.”

Thank you, NC, for giving me yet another reason why I’m glad I left Twitter.

Yves’s intro is on point.

Who cares what a bot is? If I were purchasing Twitter and they represented they had X number of users upon which a good faith offer was made, then I’d certainly be interested in knowing ‘an’ answer regarding bots vs humans. A perfect answer? This may be impossible, as has been observed, but filings have been made and representations made, and if bots materially affect these numbers, they affect the deal. It’s entirely reasonable in my view to say, hol’ up! Musk is doing, or has done, that, and now we see whether the deal can be adjusted because the numbers are wonky. Lesson for the next vendor of something? You better be telling the truth! Any takers on whether Facebook’s unique users numbers in the BILLIONS are legit?

Basically, this post is “We created a Bot-O-Meter that cannot measure bots. Here is why what we say is not garbage…”

Just thought about it. Maybe the reason that there is a desire to keep Twitter bots is their utility as a ‘nudge’ tool by certain groups. They can be used to ‘nudge’ people and groups into a certain direction or cause. So it all comes down to Nudge Theory-

‘Nudge theory is a concept in behavioral economics, political theory, and behavioral sciences that proposes positive reinforcement and indirect suggestions as ways to influence the behavior and decision-making of groups or individuals. Nudging contrasts with other ways to achieve compliance, such as education, legislation or enforcement.’

https://en.wikipedia.org/wiki/Nudge_theory

Easy two step process to stop the bots.

1) Stop reporting the number of followers. This should not be permitted as public information.

2) Close all accounts to replies by default, with some basic security options to grant access either in blocks and/or individually.

Another alternative is to do something like Substack has done; introduce an option that permits only monthly subscribers to post replies.

Substack and Twitter are a very natural fit. One drives the other. Musk may be considering Substack as his next purchase. Wouldn’t be surprised if he’s already working on it.

No doubt this would change Twitter dramatically, but it would go a long way to remove the detritus. Let their competitors bear this useless cost, but I suspect the bot activity would gradually disappear entirely.

Yves, re: your intro, download bots like @threadreaderapp and @this_vid facilitate local archiving and later reposting, especially in these information-controlled times where a bookmark or a tweet someone doesn’t like might not be there tomorrow. I think those are very good, valuable bots for those who elect not to use local software like youtube-dl to do the same.