Jerri-Lynn here. Grab a cup of coffee and make time to read this devastating analysis of what went wrong at Boeing.

By Gregory Tavis, a writer, a software executive, a pilot, and an aircraft owner who has logged more than 2,000 hours of flying time, ranging from gliders to a Boeing 757 (as a full-motion simulator).

[NB: This is a companion to How the Boeing 737 Max Disaster looks to a Software Developer which appeared in IEEE Spectrum in the spring of 2019]

In late 2018 and early 2019, two brand-new Boeing 737 MAX aircraft crashed within half a year of one another, killing all aboard both aircraft. Because the new model had made so few flights, those two crashes gave the 737 MAX a rate of 3.08 fatal crashes per million flights.

To put that number in perspective, the rest of the 737 family has a rate of 0.23 and the 737’s main competitor, the A320 family, has a rate of 0.08. The A320neo, the latest version of the A320 and the airplane against which the 737 MAX was designed to compete, has a rate of 0.0 (zero). (airsafe.com)
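The arithmetic behind these rates is simple enough to sketch. The snippet below is only an illustration, not the method airsafe.com uses. The function names are mine, and the flight count is back-calculated from the two crashes and the 3.08 figure rather than taken from any source.

```python
def fatal_rate_per_million(fatal_crashes: int, flights: float) -> float:
    """Fatal crashes per one million flights."""
    return fatal_crashes / flights * 1_000_000


def implied_flights(fatal_crashes: int, rate_per_million: float) -> float:
    """Back out the number of flights implied by a crash count and a rate."""
    return fatal_crashes / rate_per_million * 1_000_000


# Two fatal crashes at 3.08 per million implies roughly 650,000 flights.
max_flights = implied_flights(2, 3.08)
print(f"Implied 737 MAX flights: {max_flights:,.0f}")

# Ratios of the quoted rates: how many times worse the MAX looks.
print(f"vs. rest of 737 family: {3.08 / 0.23:.1f}x")
print(f"vs. A320 family:        {3.08 / 0.08:.1f}x")
```

With so few flights in the denominator, each additional crash moves the rate enormously, which is why the comparison is so stark.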

Both crashes were traced to a software system unique to the airplane, called the Maneuvering Characteristics Augmentation System, or MCAS. The 737 MAX used new and larger engines placed further forward of the wing (for ground clearance). And those engines created an aerodynamic instability that MCAS was intended to correct.

Soon after the crashes Boeing revealed that MCAS relied on something called an “angle of attack” (AOA) sensor to tell the software if that instability needed to be corrected. Angle of attack sensors are relatively unreliable devices on the outside of the airplane.

Because of this unreliability, it is common to mount several AOA sensors on the aircraft. For example: the A320neo has three. While the 737 MAX has two AOA sensors, the MCAS software used only one of them at a time. Subsequently, the MCAS software was triggered by bad data from the single AOA sensor.

This then rendered the aircraft uncontrollable with the loss of 346 lives.

The Fix Is In

Boeing proposed a number of fixes to the problem. The most important: having MCAS use both of the 737 MAX’s sensors, instead of just one. If the two sensors disagreed, MCAS would not trigger.
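A minimal sketch of that two-sensor rule might look like the following. It is not Boeing's code. The tolerance, the activation threshold and every name here are assumptions made up for illustration.

```python
from typing import Optional

# Hypothetical tolerance, chosen only for illustration.
MAX_DISAGREEMENT_DEG = 5.0


def agreed_aoa(left_deg: float, right_deg: float,
               tolerance_deg: float = MAX_DISAGREEMENT_DEG) -> Optional[float]:
    """Return the mean of the two readings, or None if they disagree."""
    if abs(left_deg - right_deg) > tolerance_deg:
        return None
    return (left_deg + right_deg) / 2.0


def mcas_may_trigger(left_deg: float, right_deg: float,
                     activation_deg: float) -> bool:
    """Allow activation only when both sensors agree AND the agreed
    angle of attack exceeds the (hypothetical) activation threshold."""
    aoa = agreed_aoa(left_deg, right_deg)
    return aoa is not None and aoa > activation_deg


print(mcas_may_trigger(4.0, 74.5, activation_deg=12.0))   # sensors disagree
print(mcas_may_trigger(14.0, 14.5, activation_deg=12.0))  # sensors agree, high AOA
```

Note what the check cannot do: with only two sensors it can tell that the readings disagree, but not which one is wrong, so the only safe response is to stand down.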

Using two sensors, instead of just one, is such an obvious improvement that it is difficult to understand why they did not just do so in the first place. Nevertheless, Boeing pledged to change the software, to use both, just over half a year ago.

It still has yet to demonstrate a viable fix.

Boeing’s inability to demonstrate a fix for its troubled MCAS system is a demonstration of just how deep the problem is. It illustrates how desperate Boeing is to keep alive a software solution to the aircraft’s instability issues.

Most chillingly, it illustrates just how inadequate such a solution is to the issue.

Most sadly, it is a symbol of the collapse of institutions in the United States. We were once considered the world’s gold standard in everything from education to manufacturing to effective and productive public-sector regulation. That is all going down the drain, flushed by a belief in things that just are not true.

Trying to Make Sense of It

Since I first learned of the nature of MCAS and its deficiencies, I’ve struggled to come up with a theory of why? How could something as manifestly deadly, and incompetent, as MCAS ever see the light of day within a company like Boeing?

MCAS is dumb as a bag of hammers, as incomplete as Beethoven’s 10th symphony, and as deadly as an abattoir. Its risk to the company was total. How, then, did it ever see the light of day?

I believe the answer lies in the nature of leadership of the Boeing organization. And the effect on the company’s culture that leadership has.

Charles Pezeshki has a theory of empathy in the organization. When I use the term “empathy” in this article, it is Pezeshki’s term and not the more general vernacular understanding. Specifically, my understanding of empathy in this context is a sense of trust between all individuals in an organization that arises from transparency. That transparency, in turn, enables an understanding of both shared success as well as shared risk.

What that means to an organization like Boeing is:

- Empathy rules relationships

- Safety is the foundation of empathy

- Empathy catalyzes synergy

- Empathy handles complexity well

- Empathy governs tool (and process) selection

- Different empathy levels are tied to different values

And what it means within an organization is that if there is an erosion of empathy, costs go up.

Empathy is important not only within an organization but also between organizations. When empathy is destroyed between organizations, such as has happened between Boeing and its subcontractors and suppliers, there is a quantifiable cost that can be attributed. While this cost is similar in concept to the notion of corporate goodwill, it is not the same.

Another calculus provides us with a way to understand that cost. Let’s say a subcontractor, such as Spirit Aerosystems, supplies Boeing with finished 737 fuselages at an agreed-upon price of $10 million per fuselage. But that is the price that Boeing pays only if there is total empathy, total trust, between the two companies.

If the empathy relationship has eroded, however, Boeing’s actual price goes up. If Spirit does not trust that Boeing will not break contracts between them in the future, Spirit will start making contingency plans – such as making fuselages for Boeing’s competitor, Airbus.

Like a suspicious spouse, they will begin to shift resources away from Boeing. They’ll start to “look around” for another, more faithful, partner. They flirt with Airbus and begin to retool their factories internally in the hopes of attracting that new partner. Their machinery will start to make each 737 fuselage a little less well for Boeing as the tools become less precise for Boeing and more precise for Airbus.

Their workers, likewise, will shift their future attention from the company that they perceive as yesterday’s news and towards the company with which they hope to form a better relationship. And that will affect the quality of the work that Spirit does for Boeing (down) and Airbus (up).

That also costs money. And that cost is reflected in what Boeing will need to do to re-work defective fuselages from Spirit and in its future negotiations with Spirit.

Redundancy

In aviation, redundancy is everything. One reason is to guard against failure, such as the second engine on a twin-engine airplane. If one fails, the other is there to bring the plane down to an uneventful landing.

Less obvious than outright failure is the utility of redundancy in conflict resolution. A favorite expression of mine is: “A person with one watch always knows what time it is. A person with two watches is never sure.” Meaning if there’s only one source of truth, the truth is known. If there are two sources of truth and they disagree about that truth there is only uncertainty and chaos.

The straightforward solution to that is triple or more redundancy. With three watches it is easy to vote the wrong watch out. With five, even more so. This engineering principle derives from larger social truths and is embedded in institutions from jury pools to straw polls.
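Voting the wrong watch out can be sketched in a few lines. The example below uses mid-value selection (taking the median), which is one common way to vote among redundant sources. I am not claiming any particular aircraft implements it this way, and the function names and tolerance are illustrative.

```python
from statistics import median
from typing import List, Sequence


def vote(readings: Sequence[float]) -> float:
    """Mid-value select: with an odd number of sources, the median
    ignores a single wild reading no matter how wrong it is."""
    if len(readings) < 3:
        raise ValueError("voting needs at least three sources")
    return median(readings)


def outvoted(readings: Sequence[float], tolerance: float) -> List[int]:
    """Indices of sources that differ from the voted value by more
    than the tolerance."""
    voted = vote(readings)
    return [i for i, r in enumerate(readings) if abs(r - voted) > tolerance]


# Three watches, in minutes past noon; the third is badly off.
watches = [61.0, 60.0, 95.0]
print(vote(watches))                     # 61.0
print(outvoted(watches, tolerance=2.0))  # [2]
```

With two watches the same function refuses to answer, which is the point of the proverb: two sources can reveal a disagreement but cannot settle it.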

Physiologically, human beings cannot tell which way is up and which way is down unless they can see the horizon. The human inner ear, our first source of such information, cannot differentiate gravity from acceleration. The ear fails in its duty whenever the human to which it is attached is inside a moving vehicle, such as an airplane.

The only reliable indication of where up and down reside is the horizon. Pilots flying planes can easily keep the plane level so long as they can see the ground outside. Once they cannot, such as when the plane is in a cloud, they must resort to using technology to “keep the greasy side down” (the greasy side being the underside of any airplane).

That technology is known as an “artificial horizon.” In the early days, pilots synthesized the information from multiple instruments into a mental artificial horizon. Later a device was developed that presented the artificial horizon in a single instrument, greatly reducing a pilot’s mental workload.

But that device, as is everything in an airplane, was prone to failure. Pilots were taught to continue to use other instruments to cross-check the validity of the artificial horizon. Or, if the pocketbook allowed, to install multiple artificial horizons in the aircraft.

What is important is that the artificial horizon information was so critical to safety that there was never a single point of reference nor even two. There were always multiples so that there was always sufficient information for the pilot to discern the truth from multiple sources — some of which could be lying.

Information Takers and Information Givers

The machinery in an aircraft can be roughly divided into two classes: Information takers and information givers. The first class is that machinery that manages the aircraft’s energy, such as the engines or the control surfaces.

They are the aircraft’s machine working class.

The second class of machinery is the information givers. The information givers are responsible for reporting everything from the benign (are the bathrooms in use?) to the critical (what is our altitude? where is the horizon?).

They are the aircraft’s machine eyes and ears.

Redundancy Done Right (for Its Time)

All of the ideas and technology embodied in the Boeing 737 were laid down in the 1960s. This ran from what kind of engines, to pressurization, to the approach to the needs of redundancy.

And the redundancy approach was simple: two of everything.

Laying that redundancy out in the cockpit became straightforward. One set of information-givers, such as airspeed, altitude and horizon, on the pilot’s side.

And another set of identical information-givers on the co-pilot’s side. That way any failure on one side could be resolved by the pilots, together, agreeing that the other side was the side to watch.

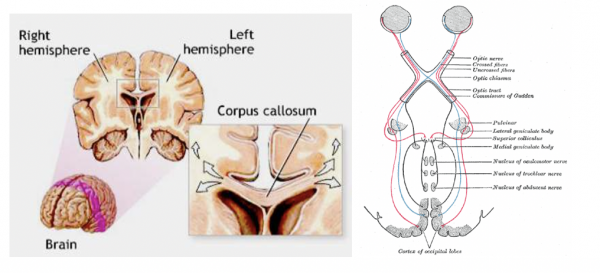

Origin of Consciousness in the Bicameral Mind

Visualize, if you will, the cockpit of a Boeing 737 as a human brain. There is a left (pilot) side, full of instrumentation (information givers, sensors such as airspeed and angle of attack), a couple pilots and an autopilot.

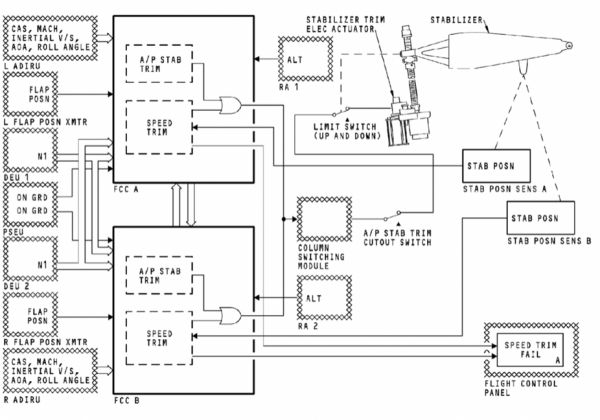

And there is a right (co-pilot) side, with the exact same things. In the picture above items encircled by same-colored ovals are duplicates of one another. For instance, airspeed and vertical speed are denoted by purple ovals. The purple ovals on the left (pilot’s) side get their information from sensors mounted on the outside of the plane, on the left side. The ones on the right get theirs from, well, the right side.

And, like a human brain, there is a corpus callosum connecting those two sides. That connection, however, is limited to the verbal and other communication that the human pilots make between themselves.

In the human brain, the right brain can process the information coming from the left-eyeball. The left brain can process the information coming from the right-eyeball.

In the 737, however, the machinery on the co-pilot’s side is not privy to the information coming from the pilot’s side (and vice-versa). The machines on one side are alienated from the machines on the other side.

In the 737 it is up to the humans to intermediate. The human pilots are the 737’s corpus callosum.

The 737 Autopilot Origin Story

The 737 needed an autopilot, of course, and its development was straightforward.

Autopilots in those days were crude and simple electromechanical devices, full of hydraulic lines, electric relays and rudimentary analog integration engines. They did little more than keep the wings level, hold altitude and track a particular course.

Obtaining the necessary redundancy in the autopilot system was as simple as having two of them. One on the pilot’s side, one on the co-pilot’s side. The autopilot on the pilot’s side would get its information from the same information-givers giving the pilot herself information.

The autopilot on the co-pilot’s side would get its information from the same information-givers giving the co-pilot his information.

And only one auto-pilot would function at a time. When flying on auto-pilot, it was either the pilot’s or the co-pilot’s auto-pilot that was enabled. Never both.

And in those simple, straightforward, days of Camelot, it worked remarkably well.

In the picture above you can see the column labelled “A/P Engage.” This selects which of the two autopilots is in use, A or B. The A autopilot gets its information from the pilot’s side. The B from the co-pilot. If you select the B autopilot when the A is engaged, the A autopilot will disengage (and vice-versa).

What this means is that the pilot’s autopilot does not see the co-pilot’s airspeed. And the co-pilot’s autopilot does not see the pilot’s airspeed. Or any of the other information-givers, such as angle of attack.
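The selector logic described above can be modeled as a toy. This is a sketch under my own assumptions, not the 737's actual implementation. The class names and sensor fields are invented for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class SideSensors:
    """Information-givers on one side of the cockpit (illustrative fields)."""
    airspeed_kt: float
    aoa_deg: float


class AutopilotPanel:
    """Toy model of the A/P Engage selector described above."""

    def __init__(self, left: SideSensors, right: SideSensors) -> None:
        # Autopilot A is wired to the pilot's side, B to the co-pilot's.
        self._sensors: Dict[str, SideSensors] = {"A": left, "B": right}
        self.engaged: Optional[str] = None

    def engage(self, channel: str) -> None:
        """Engaging one autopilot implicitly disengages the other."""
        if channel not in self._sensors:
            raise ValueError(f"unknown autopilot {channel!r}")
        self.engaged = channel

    def visible_sensors(self) -> SideSensors:
        """The engaged autopilot sees only its own side's sensors."""
        if self.engaged is None:
            raise RuntimeError("no autopilot engaged")
        return self._sensors[self.engaged]


panel = AutopilotPanel(SideSensors(250.0, 3.0), SideSensors(251.0, 3.2))
panel.engage("A")
panel.engage("B")               # A drops out; only B is engaged now
print(panel.engaged)            # B
print(panel.visible_sensors())  # co-pilot's side only
```

Nothing in this model lets the engaged autopilot compare its readings against the other side. That comparison is left to the humans.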

Once again, the human pilots must act as the 737’s corpus callosum.

The Fossil Record

JFK was president when the 737’s DNA, its mechanical, electronic and physical architecture, was cast in amber. And that casting locked into the airplane’s fossil record two immutable objects. One, the airplane sat close, really close, to the ground (to make loading passengers and luggage easier at unimproved airports). And, two, the divided and alienated nature of its bicameral automation bureaucracy.

These were things that no amount of evolutionary development could change.

Rise of the Machines

Of all the -wares (hardware, software, humanware) in a modern airplane the least reliable and thus the most liability-attracting is the humanware. Boeing, ironically, estimates that eighty percent of all commercial airline accidents are due to so-called “pilot error.” I am sure that if Boeing’s communication department could go back in time, they’d like to revise that to 100%.

With that in mind, it’s not hard to understand why virtually every economic force at work in the aviation industry has on its agenda at least one bullet-point addressed to getting rid of the human element. Airplane manufacturers, airlines, everyone would like as much as possible to get rid of the pilots up front. Not because pilots themselves cost much (their salaries are a minuscule portion of operating an airline) but because they attract so much liability.

The best way to get rid of the liability of pilot error is to simply get rid of the pilots.

Emergence of the Machine Bureaucracy

In aviation’s early days, there was no machine bureaucracy. Pilots were responsible for processing the information from the givers and turning that into commands for the takers. Stall warning (information giver) activated? Push the airplane’s nose down and increase power (commands to the information takers).

Soon, however, the utility of allowing machines to perform some of the pilot’s tasks became obvious. This was originally sold as a way to ease the pilot’s tactical workload, to free the pilots’ hands and minds so that they could better concentrate on strategic issues – such as the weather ahead.

It did not take long to realize that the equation was backward. The machines worked well when they were subservient to the pilots. But they would work even better when they were superior to the pilots.

And now there was a third class of machinery in the plane. The machine bureaucrat.

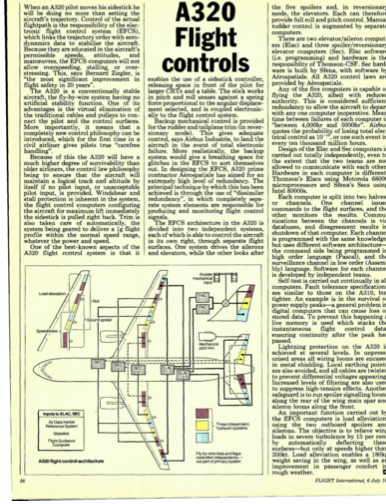

The Airbus consortium, long a leader in advancing the technological sophistication of aviation (they succeeded with Concorde where Boeing had utterly failed with their SST, for example), realized this. And in the late 1970s embarked on a program to create the first “fly by wire” aircraft, the A320.

In a “fly by wire” aircraft, software stands between man and machine. Specifically, the flight controls that pilots hold in their hands are no longer connected directly to the airplane’s information takers, such as the control surfaces and engines. Instead the flight controls become yet another set of information-givers.

Automation Done Right

When Airbus floated the idea of a “fly-by-wire” aircraft, the A320, it knew it had to do it right. It was a pioneer in a technology that would need to prove itself to a skeptical industry, not to mention public. It had to build trust that it could make something that was safe.

And the way to do it right was with a maximum amount of empathy. Toward that end Airbus was extremely transparent about exactly how the A320’s automation would work. They told the public (this was in the mid 1980s) that the automation system would employ an army of redundant sensors, like the kind implicated in the MCAS crashes.

They told the public that the system would employ an army of redundant computers, each able to take over the tasks of any computer gone rogue, or down. And they told the public that the system would use an army of disparate human groups. The system’s design was laid down on paper and disparate groups from disparate companies had to implement identical solutions to the same designs.

Everybody worked in an atmosphere of total transparency.

That way if any of the implementing companies suffered from an inadequacy of empathy, if any of them tried to cut a corner or didn’t understand what their jobs were, it would be countered by the results from the companies that did.

And what they produced was a set of machine bureaucrats, taking information from the machine eyes and ears – airspeed, angle of attack, altitude – as well as from the pilots, both human and automatic.

And they evaluate that information – its quality, its reliability and its probity on an equal level. Which means with an equal amount of skepticism.

The human pilots were demoted out of the bureaucracy and into the role of information-givers. Alongside things like the airspeed sensors, the angle of attack sensors, and all the other sensors the pilots were only there to serve as additional eyes and ears for the machines.

Next step, bathroom monitor.

The airlines saw the writing on the wall and were delighted. Boeing, caught with its pants around its ankles, embarked on a huge anti-automation campaign – even as it struggled to adapt other aspects of the A320 technology, such as its huge CFM56 engines, to Boeing’s already-old 737 airframe.

Boeing’s strategy worked well for quite some time. By retrofitting the A320’s engines to the 737, they were able to match the A320’s fuel economics. And with a vigorous anti-automation campaign, aided by pilots’ unions and a public fearful of machine control, they kept the 737 sales rolling.

It All Comes Down to Money

Wall Street loves technology that involves little or no capital investment. Think Uber. And why it hates old-line manufacturing with its expensive factories and machinery.

And people. Which is what Boeing’s managers – its board of directors – understood when they embarked on an ambitious program to re-make the company. Re-make the company away from its old-line industrial roots, which Wall Street abhorred, and more like something along the lines of an Apple Computer.

The Liquidation of Capital

There were a lot of components to that transformation. Including firing all the old-line engineers in unionized Washington State. And replacing them with a cadre of unskilled workers, such as those putting together the 787 “Dreamliner” in antebellum South Carolina.

Putting together, not making, because another relentless part of the transformation was liquidation of Boeing’s capital plant. Like another once-giant aviation company, Curtiss Wright, Boeing’s managers had drunk the Wall Street koolaid and believed, with no empirical evidence, that the best way of making money by making things was not to make things at all.

Think of it as aviation’s version of Mel Brooks’ black comedy The Producers (The Producers was about making money by losing money).

A key Wall Street shibboleth along those lines being something known as “Return on Net Assets,” or RONA. RONA says “Making something with something is expensive. So make something, but make it with nothing.”

Think of it as “Springtime for Hitler” (“Springtime for Hitler” was the title of a show within The Producers assumed to be so awful that it was guaranteed to lose money, and thus make money).

Springtime for Hitler, in the Wall Street world, means that US manufacturing companies stop making anything themselves. Instead everything they make, they get other people to make for them. Using exploited labor, producing inferior product greased on the wheels of distrust, fear and an utter lack of shared mission or shared sacrifice.

That means, for example, that the 787s being assembled in South Carolina are being put together by people whose last job was working the fry pit at the local burger joint using parts made by equally, and intentionally, marginalized people from half a world away.

What could go wrong? Well, Boeing itself only makes about ten percent of each 737. The rest, ninety percent, is made by its subcontractors and suppliers. That is a situation in which Boeing is extremely exposed to any breakdown in empathy between itself and its suppliers, yet that risk is not factored into its stock price by Wall Street.

That’s what could go wrong.

Software Is Killing Us

As a forty-year veteran of the software development industry and a person responsible for directing teams that generated millions of lines of computer code, I will tell you something wonderful about the industry.

Anything you can do by building hardware – by casting metal, sawing wood, tightening fasteners or running hoses – you can do faster, cheaper, and with less organizational heartache with software. And you can do it with far fewer prying eyes, scrutiny or oversight.

And the icing on the cake: if you screw it up, you can pass along (“externalize,” as the economists say) the costs of your mistakes to your customers. Or in this case, the flying public.

The Rockwell-Collins EDFCS-730

As mentioned earlier in this article, the original 737 autopilots were a collection of electromechanical controls made out of metal, hydraulic fluid, relays, etc. And there were two of them, each connected to either the pilot’s or co-pilot’s information-givers.

Over time more and more of the electromechanical functions of the autopilots were replaced and/or supplanted by digital components. In the most current autopilot, called the EDFCS-730, that supplanting is total. The EDFCS-730 is “fully digital” – meaning no more metal, hydraulic fluid, etc. Just a computer, through and through.

And, just like the original autopilots, there are only two EDFCS-730s (the A320neo has the equivalent of five and they are far more comprehensive). And each one can only see the information givers on their respective sides (all of the computers in the A320neo can see all of the givers). Remember, that architecture was cast in amber.

The EDFCS-730 is “Patient Zero” of the MCAS story. It offered Boeing an enormous set of opportunities. First, it was far cheaper on a lifecycle basis than the old units it replaced. Second, it was trivial to re-configure the autopilot when new functionality was needed – such as a new model of 737.

Third, its operating laws were embedded in software – not hardware. That meant that changes could be made quickly, cheaply and with little or no oversight or scrutiny. One of the aspects of software development in aviation is that there are far fewer standards, practices, or requirements for making software in aviation than there are for making hardware.

A function that would draw an army of auditors, regulators and overseers in hardware gets by with virtually no oversight if done in software.

There is a USB port in the 737 cockpit for updating the EDFCS-730 software. Want to update it with new software? You need nothing more than a USB keystick.

It’s not hard to see why software has become an unbelievably attractive “manufacturing” option.

Longitudinal Stability

Late in the 737 MAX’s development, after actual test flying began, it became apparent that there was a problem with the airframe’s longitudinal stability. We do not know how bad that problem is nor do we know its exact nature. But we know it exists because if it didn’t, Boeing would not have felt the need to implement MCAS.

MCAS is implemented in (“lives in”) the EDFCS-730. It pushes the 737 MAX’s nose down when the system believes that the airplane’s angle of attack is too high. For more on that process, see https://spectrum.ieee.org/aerospace/aviation/how-the-boeing-737-max-disaster-looks-to-a-software-developer

I believe that Boeing anticipated the longitudinal stability issue arising from the MAX’s larger engines and their placement. In the late 1970s Boeing had encountered something similar when fitting the CFM56 engines to the 737 “Classic” series. There it countered the issue with a set of aerodynamic tweaks to the airframe, including large strakes affixed to the engine cowls which are readily visible to any passenger sitting in a window seat over or just in front of the wing.

When the issue of longitudinal stability arising from engine size and placement arose again with the larger-still CFM LEAP engines on the 737 MAX, Boeing had a tool at its disposal that it had not had with previous generations of 737.

And that tool was the EDFCS-730 autopilot.

Boeing had a choice: correct the stability problems in the traditional manner (meaning expensive changes to the airframe) or quickly shove some more software, by shoving a USB keystick, into the EDFCS-730 to make the problem go away.

One little problem…

In the olden days all of the sensors outside the plane that gathered “air data” were connected directly to their respective cockpit instruments. The pitot tubes had plumbing connecting them to the air speed instruments, the static ports connected directly to the vertical speed and altimeter instruments, etc.

This turned into a bit of a plumbing nightmare as well as made it difficult to share that data with a larger number of instruments and devices. The solution was a box called an Air Data Inertial Reference Unit (“ADIRU” in the diagram, below), a kind of Grand Central Station of air data. The “IRU” part of it refers to the plane’s inertial reference platform, which provides the plane’s location and attitude (pointed up, down, sideways, etc.)

ADIRUs were not common on commercial airliners (I can think of none, actually) when the 737 was first certified and, indeed, the first 737s did not have any. But as newer models emerged and the technology became commonplace, the 737 gained ADIRUs as well. However, it gained them in a way that did not fundamentally change the bicameral nature of its information givers.

The A320 never had the same bicameral architecture. The A320 was born with ADIRUs and it has not two but three (see the “two watches” problem, above). In the A320 all three ADIRUs are available to all five Flight Control Computers (FCCs), all the time – making it relatively easy for any of the FCCs to read from any of the three ADIRUs and determine if one of the ADIRUs is not telling the truth.

One more small note, before we go on. The industry uses the term “Flight Control Computer,” (FCC). On all aircraft that I can think of, the FCCs are the computers used to intermediate between the pilot’s controls and the airplane’s control surfaces. They are where the software that stands between man and machine “lives.”

Airbus, for example, makes an express differentiation between the FCCs and the autopilot computers. They are two separate functions.

Boeing chooses to call the autopilot computers (EDFCS-730s) “Flight Control Computers.” In the 737, which is not a fly by wire aircraft, there is no differentiation between FCC and autopilot. They are one and the same.

I suspect this has more to do with marketing than anything but readers should take away from it one important thing: in a real automation architecture flight control functions and autopilot functions are distinct. In the 737 MAX Boeing has attempted to gain the marketing advantage of having a “Flight Control Computer” architecture without having to do the real work required of implementing a robust FCC architecture. Instead, they are cramming more and more automation functions into boxes never designed for such: the autopilot boxes.

The result is Frankenplane.

Above is a diagram of the automation architecture of the 737 NG and 737 MAX. The two components labelled “FCC A” (Flight Control Computer) and “FCC B” are the EDFCS-730s.

Two things stand out in the diagram:

- There appears to be a link (a “corpus callosum”) between FCC A and FCC B (vertical arrows between them)

- The left Angle of Attack (AOA) sensor (contained in “L ADIRU”) is connected to FCC A & the right Angle of Attack (AOA) sensor (contained in “R ADIRU”) is connected to FCC B

At this point my readers may be justifiably angry with me. After all, I’ve been going on and on about the bicameral nature of the 737 architecture and the lack of an electronic corpus callosum between the two “flight control computers” (autopilots). Yet it is clearly there in the diagram, above. I feel your pain.

In my defense, I ask that you remember one thing: we know that the initial MCAS implementation did not use both of the 737’s angle of attack sensors, despite the link between the FCCs that should have allowed it to do so. It should have been relatively easy for the FCC in charge of a given flight, running MCAS, to ask the FCC not in charge to pass along the AOA information over the link in the diagram.

Why did they not do this? Forensically, I can think of two possible reasons:

- It was just “too hard.” The software in the EDFCS-730 is too brittle and crufty (these are software technical terms, believe me), there is something about the nature of the link (it’s a 150 baud serial link, for example (note for the pedants, I am not saying it is)), you have to “wake up” the standby FCC, etc.

- Boeing deliberately did not want to use both AOA sensors because, as I said at the beginning, “a man with one AOA sensor knows what the angle of attack is, a man with two AOA sensors is never sure.” I.e., if Boeing used two sensors then it would have had to deal with the problem of what to do if they disagreed. And that would have meant training, which would have violated the directive to ship the airplane.

I tend to tilt towards #1. I think it’s just really, really hard to do so and I think that the “boot up” problems (see end of this article) point to exactly that. If so, that is yet another damning reason why neither the FAA nor anyone else should ever certify MCAS as a safe solution to the aircraft’s longitudinal stability problem.

That said, it’s never “either/or.” The answer could be “both #1 and #2.”

Automation Done Wrong

I have spoken to individuals at all of the companies involved and have yet to find anyone at Rockwell Collins (now Collins Aerospace) who can direct me to the individuals tasked with implementing the MCAS software. Collins is, predictably, extremely reluctant to take ownership of either the EDFCS-730 or its software and has predictably kept its mouth very shut for over a year.

I have been assured repeatedly that the internal controls within Collins would never have allowed software of such low quality to go out the door and that none of their other autopilot products share much, if any, commonality with the EDFCS-730 (which exists for and only for the 737 NG and 737 MAX aircraft).

That, together with off the record communications, leads me to believe that Boeing itself is responsible for the EDFCS-730 software. Most important, for the MCAS component. The responsibility for creating MCAS appears to have been farmed out to a low-level developer with little or no knowledge of larger issues regarding aviation software development, redundancy, information takers, information givers, or machine bureaucracy.

And I believe this is deliberate. Because a more experienced developer, of the kind shown the door by the thousands in the early 2000s, would have immediately raised concerns about the appropriateness of using the EDFCS-730, a glorified autopilot, for the MCAS function – a flight control function.

They would have immediately understood that the lack of a robust electronic corpus callosum between the left and right autopilots made impossible the use of both angle of attack sensors in MCAS’ automatic deliberations.

They would have pointed out that the software needed to realize that an angle of attack that goes from the low teens to over seventy degrees, in an instant, is structurally and aerodynamically impossible.

And not to point the nose at the ground when it does. Because the data, not the airplane, is wrong.
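As a rough illustration of that kind of sanity check, here is a minimal Python sketch that rejects an angle-of-attack reading if it implies a physically implausible rate of change. The 20 degrees-per-second limit and the function name are assumptions made up for this example; a real limit would have to come from the airframe's aerodynamics, and nothing here reflects the actual EDFCS-730 code.

```python
# Hypothetical ceiling on how fast a real angle of attack could change.
MAX_PLAUSIBLE_RATE_DEG_PER_S = 20.0


def aoa_sample_is_plausible(
    previous_deg: float,
    current_deg: float,
    dt_s: float,
    max_rate_deg_per_s: float = MAX_PLAUSIBLE_RATE_DEG_PER_S,
) -> bool:
    """Return False when the jump between two samples is too fast to be real."""
    if dt_s <= 0:
        raise ValueError("dt_s must be positive")
    rate_deg_per_s = abs(current_deg - previous_deg) / dt_s
    # A jump that exceeds the limit means the data is wrong, not the airplane.
    return rate_deg_per_s <= max_rate_deg_per_s
```

Under these assumed numbers, a reading that moves from 13.0 to 13.5 degrees in a tenth of a second passes, while one that leaps from 13.0 to 74.5 degrees in the same interval is rejected, and the software would have no grounds to trim the nose down.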

And if they had, the families and friends of nearly four hundred dead would be spared their bottomless grief.

Instead, Wall Street’s empathy discount sealed their fate.

Quick, Dirty and Deadly

The result is, as they say, history. Wall Street had stripped Boeing of a leadership cadre of any intrinsic business acumen. And its leadership had no skills beyond extraordinary skills of intimidation through a mechanism of implied and explicit threats.

Empathy has no purchase in such an environment. The collapse of trust relationships between individuals within the company and, more important, between the company and its suppliers fertilized the catastrophe that now engulfs the enterprise.

From high in the company came a diktat: ship the airplane. Without empathy, there was no ability to hear cautions about the method chosen by which to ship (low-quality software).

The Pathology of Boeing’s Demise

Much has already been written about the effect of McDonnell Douglas’ takeover of Boeing. John Newhouse’s Boeing vs. Airbus is the definitive text in the matter with L.J. Hart-Smith’s “Out-sourced profits- The cornerstone of successful subcontracting” being the devastating academic adjunct.

Recently Marshall Auerback and Maureen Tkacik have covered the subject comprehensively, leaving no doubt about our society’s predilection for rewarding elite incompetence handsomely.

Alec MacGillis’ “The Case Against Boeing” ( https://www.newyorker.com/magazine/2019/11/18/the-case-against-boeing ) lays out the human cost of Wall Street’s murderous rampage in a manner that should leave claw marks on the chair of anyone reading it.

Charles Pezeshki’s “More Boeing Blues” ( https://empathy.guru/2016/05/22/more-boeing-blues-or-whats-the-long-game-of-moving-the-bosses-away-from-the-people/ ) is arresting in its prescience.

Boeing’s PR machine has repeatedly lied about the origin and nature of MCAS. It has tried to imply that 737 MCAS is just a derivation of the MCAS system in the KC-46. It is not.

It has tried to blame the delays in re-certification on everything from “cosmic rays” (a problem the rest of the industry solved when Eisenhower was president) to increased diligence (up is the only direction from zero). Most of the press has bought this nonsense, hook, line, and sinker.

More nauseatingly, it promotes what I will call the “brown pilot theory.” Namely, that it is pilot skill, not Wall Street malevolence, that is responsible for the dead. In service of that theory it has enlisted aviation luminary (and a personal hero-no-more of mine) William Langewiesche.

For the best response to that, please see Elan Head’s “The limits of William Langewiesche’s ‘airmanship’” ( https://medium.com/@elanhead/the-limits-of-william-langewiesches-airmanship-52546f20ec9a )

Those individuals “get it.” Missing here are accurate pontifications from much of the aviation press, the aviation consultancies or financial advisory firms. All of whom have presented to the public a collective face of “this is interesting, and newsworthy, but soon the status quo will be restored.”

A Well, Poisoned

Boeing’s oft-issued eager and anticipatory restatements of 737 MAX recertification together with its utter failure to actually recertify the aircraft invite questions as to what is actually going on. It is now over a year since the first crash and coming up on the anniversary of the second.

Yet time stands still.

What was obvious, months ago, was that the software comprising MCAS was developed in a state of corporate panic and hurry. More important, it was developed with no oversight and no direction other than to produce it, get it out the door, and make the longitudinal problem go away as quickly, cheaply, and silently as a software solution would allow.

What became clear to me, subsequently, was that all of the software in the EDFCS-730 was similarly developed. And that when the disinfectant of sunlight shone on the entire EDFCS-730 software, going back decades, the entertainment value would be – as my late wife’s father would say – “zero.”

The FAA was caught with its hand in the cookie jar. The FAA’s loathsome Ali Bahrami, nominally in charge of aviation safety, looked the other way as Boeing fielded change after deadly change to the 737 with nary a twitter from the agency whose one job was to protect the public. In the hope that a door revolving picks all for its bounty.

Collapse

Recent headlines speak in vague terms about Boeing’s inability to get the two autopilots communicating on “boot up.” Forensically, what that means is that Boeing has made an attempt to create a functional electronic corpus callosum between the two, so that the one in charge can access the sensors of the one not in charge (see “One little problem…,” above).

And it has failed in that attempt.

Which, if you understand where Boeing the company is now, is not at all surprising. Not surprising, either, is Boeing’s recent revelation that re-certification of the 737 MAX is pushed back to “mid-year” 2020. Applying a healthy function to Boeing’s public relations prognostications, that is accurately translated as “never.”

For it was never realistic to believe that a blindered, incompetent, empathy-desert like Boeing, which had killed nearly four hundred already, was able to learn from, much less fix, its mistakes.

This was driven infuriatingly home with today’s quotes from new-CEO David Calhoun. As the Seattle Times reported, Calhoun’s position is:

“I don’t think culture contributed to that miss,” he said. Calhoun said he has spoken directly to the engineers who designed MCAS and that “they thought they were doing exactly the right thing, based on the experience they’ve had.”

This is as impossible as it is Orwellian. It shows that Boeing’s leadership is unwilling to acknowledge (and probably unaware of) what the root issues are. MCAS was not just bad engineering.

It was the inevitable result of the cutting of the sinews of empathy, sinews necessary for any corporation to stand on its own two feet. Boeing is not capable of standing any more and Calhoun’s statements are the proof.

Solid tested architectures destroyed because software & ideology got regulation seriously wrong. Same story across every silo of our world.

if only we had a word that combined “software” and “theology.” Then maybe we could describe Techworld more accurately.

the word is “Apple”.

“Agile”?

That’s a marketing slogan, I’m talking about the belief system

Codeology – the belief that a better line of code thought up by a fallible human can overcome all physical or sociological obstacles.

Also known as:

“Code will fix it!”

Not sure this was a technology problem. It was totally in the control of management: they chose who was to do the work (they picked the consultant who did the work), they set the rules for quality, and they set the priorities: just ship it. Guessing they were blind to the downside and saw only how they could reduce costs. Now one could say that it’s a political problem (aka ideology), as the company went to political leaders wanting help to control costs for the company, saying that they would have more jobs (which was never going to happen) if the FAA was told to ‘outsource’ that safety thing. Now this might be because of that Douglas purchase (they had a history of not really considering it at all). Or it could be that Wall Street thing, as they are so short-sighted (that quarterly announcement), but short-termism has led to enormous costs. Does make one wonder if any other company has noted that. Ah, not till it costs them billions and billions. And maybe not even then.

Management will accept a 99% probability of a plane staying in the air.

Engineers, by training, prefer a 99.9% probability of not falling out of the sky. But they rarely get the final say.

It’s the Ford Pinto problem all over again.

“The safety record of the car in terms of fire was average or slightly below average for compacts, and all cars respectively. This was considered respectable for a subcompact car. Only when considering the narrow subset of rear-impact, fire fatalities for the car were somewhat worse than the average for subcompact cars. While acknowledging this is an important legal point, Schwartz rejects the portrayal of the car as a firetrap.”

“And replacing them with a cadre of unskilled workers, such as those putting together the 787 “Dreamliner” in antebellum South Carolina.”

They were making airplanes in the 19th Century? You might want to correct that. Brilliant article all the same.

PS, perhaps the author meant that they were still using slave labor.

Yes, that was the point. As a native and current denizen of the South, the sentence stood out to me. And it is largely true in substance. Alas.

As for the loss of empathy, my working life goes back to 1971, and this has been true everywhere I have worked. Outsource or ignore critical functions at every level of the organization, and this will decrease quality and increase costs. Hmm, there seems to be a leak behind that wall…not my problem, I work for someone else. Um, this particular audit function seems to miss several important measures…oh, hell, just let the Third Assistant Vice-President in charge worry about it, when s/he is not on the phone looking to move up to Second Assistant Vice-President somewhere else. You do know, right, that these three extra layers of learning objectives and student outcome “measurements” only take time away from our real responsibilities…and besides that, I have on occasion just let it go to see what happens, and no one seems to notice there is one less time- and energy-consuming, soul-deadening “report” in the “File.”

But, look at the balance sheet (whatever it purports to measure)!

Thank you, KLG.

Yesterday evening’s France 2 news, https://www.france.tv/france-2/journal-20h00/1218489-journal-20h00.html, featured the Airbus factory in Alabama. Although it was suggested that the need to market to American carriers, avoid Trump’s tariffs and take advantage of Boeing’s difficulties were the main reasons for opening there, one did wonder if the cheap and non unionised labour was as important.

@Colonel Smithers

February 14, 2020 at 9:05 am

——-

Thanks, Colonel.

As a resident of Mobile, Alabama, where the Airbus factory is located, I can tell you that the original reason for their choice was to set up an assembly line for the Air Force tanker contract that Boeing now has called the KC-46. The major considerations were one of the longest runways in the US at the former Brookley Air Force Base (now an industrial park) that was closed by Lyndon Johnson in 1964, as well as the Port of Mobile. Lack of unions was most likely also a factor, but not a main one.

When the contract was originally awarded in 2008, Airbus (EADS), with partner Northrop Grumman, was the winner. They had proposed a new airplane designed from the bottom up. Boeing proposed re-purposing the 767.

Boeing protested the award and won the contract after the Air Force rebid it in 2011. I have no doubt they used whatever political influence they had to do so.

Subsequently, Airbus decided to continue construction of the plant here to build passenger airplanes.

Also, subsequently, Boeing has had numerous problems with the KC-46, making the obvious point that politically determined contract awards are often not the best choice.

Thank you, John.

EADS North America chairman Ralph Crosby declined to protest the award saying that Boeing’s bid was “very, very, very aggressive” and carried a high risk of losing money for the company

Thanks John.

Comments like these force me to read every comment section on NC, darn you smart and thoughtful humans! I’m a slow reader so that doesn’t help.

It’s amazing, isn’t it? I don’t think I’ve seen any site with comments which are consistently so thoughtful and interesting.

for 180 degrees opp, see the twitter comments on the WHO daily updates on the coronavirus – Covid-19 https://www.pscp.tv/WHO/1lPKqVQyqEbGb

I thought Airbus proposed their transport/tanker, which isn’t new, but had been used by some air forces already. Not that Boeing was putting forth a new plane; it was based on the 767. And tariffs didn’t exist on the planes till after 2017. Course they built that plant to sell to carriers, since some don’t want to deal with a new supplier.

@d

February 15, 2020 at 11:54 am

——-

Thank you for the correction, d.

I was not precise. Airbus had indeed sold the tanker to others. However, it was designed and built from the beginning as a tanker and not as a re-purposed passenger airplane.

Quite wrong.

The former USAF KC-45 was based on the Airbus passenger aircraft A330-200. The point in favor of this aircraft was that it didn’t need any major modifications except the refueling equipment. The complete fuel load is carried in the regular tanks. The lower cargo hold of the smaller 767 tanker is full of fuel tanks.

Up until now all air forces bought the Airbus tanker with standard passenger seating for moving troops, and cargo is carried on the lower deck.

The Boeing KC-46 is a new 767 version: 767-200 fuselage, 767-300 gear, 767-400 flaps and is called 767-2C. It has a new computerized cockpit (787-style) but just like the 737 no real fly-by-wire. The A330 has fly-by-wire just like A320.

Airbus was already testing the new tanker design for Australian air force while Boeing only had a paper design.

Boeing did win the contest for cheapest tanker. USAF will use the aircraft 90% of the time as a freighter. The Airbus is able to carry 90% more pallets.

Even though Boeing offered the cheapest price, no risk was associated. Now USAF is paying more for flying outdated tankers far longer. Boeing does maintenance work for the old tankers…

One other thing. The book was The Origin of Consciousness in the Breakdown of the Bicameral Mind, by Julian Jaynes. IIRC (it was ~1976) Jaynes’ primary argument dovetails with the argument advanced here. But, if you come so close to appropriating someone else’s terminology, a reference is warranted.

I love(d) this book which I read in my most formative times (it is not new :-) . The general – though not universal (and often contested) – opinion these days is that it has been largely debunked. Nevertheless I would credit it with changing the way my thinking worked – and for the better.

I still have a copy. I believe there is a legitimate non-scanned PDF available. Would still definitely recommend.

https://philosophynow.org/issues/97/How_Old_is_the_Self

That’s exactly what I meant, perhaps not quite as directly to slavery itself but rather to the culture of disempowerment that Boeing sought to exploit.

Thanks

sorry for doubting you! Irony can be hard to read but I appreciate it. Once again, amazing, disturbing article. Thank you!

Well…… South Carolina is right next door to North Carolina, so maybe he was thinking of the Wright Bros. fixing up the flyer in their canvas walled workshop at Kitty Hawk.

BTW: Considering the lingering fondness for Confederate monuments and flags, I think “antebellum” is an all too accurate description of the local workforce. Jobs in a high-skill industry at minimum wages can rightly be called slavery.

No, South Carolina is still living in the 19th century.

Have you seen a public toilet on I-95?

Given that (from what I have read recently) Boeing’s liabilities exceed its assets and it will require further cash infusions through bond or share issuance (quite odd, that; a public company that sells shares) to carry on in the near term, perhaps it will become a takeover target. Perhaps MB could ride to the rescue.

I write this in jest, but things are becoming so strange that I suppose anything is possible.

What ever happened to Westinghouse? http://old.post-gazette.com/westinghouse/

Interesting that you mention Westinghouse. Reminds me of the nuclear industry. The reason why we have the nuclear reactors we have today? Because they were fast in generating electricity, though more dangerous. There is an actual design for a far safer nuclear reactor but it takes longer to reach optimum thermal efficiencies. The nuclear industry wanted fast reactors that quickly reached thermal efficiencies.

The nuclear industry’s desires are so entrenched in regulatory bodies that thorium reactor research has to be done overseas.

Another parallel to the Boeing saga of mismanagement, greed, and corporate degeneracy? Goodyear RV tires that led to deaths thanks to tires that Goodyear knew were defective. Yet, Goodyear executives hid that from the NHTSA with secret settlements.

The ultimate justice system for corporations is that cash is used to pay out for justice, instead of people actually being convicted and sent to prison for their actions at their company or corporation. This is the end-game for corporations in remaking our justice system. They have been quite successful.

Boeing is simply a typical example of what is now accepted practice in America.

The US Government even manages to use official secrets laws to stifle lawsuits. Such as the Area 51 workers who were exposed to toxic substances. Or The US military attempting to avoid responsibility for soldiers exposed to toxic substances such as depleted uranium and whatever toxic materials were burned in burn pits in places like Iraq. The most famous case of exposure being Agent Orange from Vietnam. When one looks? Corporations are usually involved in such practices under contract to The US Government.

Boeing is a fine representation of American cultural practice.

I think you mean Firestone tires if this references the Ford Exploder debacle.

That was more a Ford thing. They did spec out the tires, they just spec’d them to cut costs, nothing else. Sounds familiar, huh? A company putting customers’ lives at risk. Who knew that was a bad thing? Course one might think they would have learned from the Pinto; guess not.

The Firestone tire/Ford Explorer mess was a different problem. The comment above referenced Goodyear selling what were essentially passenger car tires for RV applications where the vehicle was 10x heavier than the tires were designed for. This led to a bunch of sudden tire failures resulting in some really bad RV crashes and people getting killed. It never attracted the amount of press attention that the Firestone/Explorer problems received.

The reason why we have the nuclear reactors we have today? Because they were available (albeit optimized for use on submarines).

So, where does this lead and what is the timeline? Bankruptcy? Should the company be broken up into smaller pieces? Or is this another “too big to fail” scenario? Is a reform/repair of the company even possible? How would that even happen?

It seems that the solutions needed at Boeing will be the same solutions needed in the rest of the American social/economic system.

I am reminded of *quality* as a philosophical value in “Zen And The Art Of Motorcycle Maintenance” by Robert Pirsig – a potential counterbalance to profit as a value. Ultimately, Boeing’s problems are philosophical/cultural/societal problems. And so are America’s.

The critical quote for me was: “…empathy in this context is a sense of trust between all individuals in an organization that arises from transparency…”

And I keep coming back to *quality*…

Best…H

Now there is a long forgotten book – I’ve often thought Pirsig’s book should be a ‘must read’ for all engineers and managers. I feel like throwing that book at anyone in my office who talks about productivity output measures without ever mentioning quality (they never mention quality).

Did W. Edwards Deming live in vein then?

Well, the modern ideology of management sees quality as a cost and so it gets little support until things go wrong or output suffers.

I still have a Western Electric Statistical Quality Control Handbook, written at the Chicago Hawthorne Works and which Deming contributed to. It is meant to be the shop-floor bible of quality.

I think, consistent with the denigration of hard-nosed, verifiable quality, some who could not pass an intro statistics course started the managerial techniques that became six-sigma, and once again statistical control and industrial statistical design of experiments are languishing. But then, as indicated by this article, for even six-sigma to work properly requires a level of empathy that MBAs have destroyed.

As an older experienced employee in an engineering department I am skeptical of the Six-Sigma process. I look forward to seeing someone do a green belt analysis on whether green belt analysis works. So far the times I have been sucked into the green belt goat rodeos nobody has bothered to research anything and the conclusions are obvious to anyone who has been on the floor, such as water is wet and flows downhill.

Are there publications of Deming’s “popular” works on business management? I looked him up during his boom, and what I found was a serious textbook on sampling techniques, and then a lot of slide decks and lecture notes published by his disciples. All worthy things, but no guidebook to the use of statistical techniques.

ISTR a ground bass of “You’ll never understand this, you fools. Hire a statistician already!” Maybe that’s why there were no publications.

But that was then. Have the times changed?

As I mentioned, a great intro to statistical process control is the Western Electric Statistical Quality Control Handbook. I think it is now put out by AT&T. Other sources can be found at the American Society for Quality. They used to have quite a nice intro book that takes you from understanding variability and distributions into various run charting and into sampling. There are several books on process optimization using statistical design of experiments, but that is better once one has an understanding of applied stats and modeling. However, it, and practical matters like stack-up of tolerances are covered by Western Electric.

I hesitate to recommend some of the canned software until one has a grasp of the operations. I have seen some that allow illegitimate operations.

But, all that said, a fantastic free reference from the NIST is their handbook of engineering statistics. https://www.itl.nist.gov/div898/handbook/

Thank you. I’ve put the handbook in my daily surfing bookmarks. I’ll try a daily study shot, see where it gets me.

As long as his lessons live on in countries where they were accepted to begin with, such as Japan perhaps, then no; W. Edwards Deming did not live in vein. Or in vain, either.

D’oh!

One of the few books I have reread. I shall put it on the list again.

A GE Production manager was once quoted as saying “Doing the job right is no reason not to meet cost and schedule.”

there’s only what you do and how you do it. One must be aware of both. Associates, strategy, and all the rest follow from process & quality (inclusive ‘and’)

5000 orders with deposits made and fewer than 400 delivered on the corvair of airplanes (apologies and sorrow to ralph nader) because let’s face it, if it was an easy fix it would be done by now, which means complicated solution which means more problems added to the issue of who’s going to board one spells serious problems to this observer.

If empathy and trust are necessary for building critical complex components, capitalism is by nature unsuited to these tasks.

Empathy and trust cannot exist in an environment where employees can be fired at the whim of management. 49 states in the US have “at will” employment. An employer can fire for no reason or for any reason. It’s against the law to fire someone solely because they are a member of a protected class, but any other excuse (none is needed) is valid. There are laws on the books about whistleblowing. They’re essentially never enforced. And they require situations with actual law-breaking. Building a culture of callous disregard for human life doesn’t qualify.

Being an engineer who insists on quality is a fireable offense.

In the US, the safety net is virtually nonexistent, which means employees have an even stronger motivation to obey. But even if the safety net were stronger, the moment the employee is gone, management has free rein to discard safety and common sense in pursuit of delicious bonuses. And so management fires anyone who objects and the product trundles along until it kills hundreds of people. The market is concentrated — capitalism again — and so there are no alternatives, no quality actors, no makers with empathy exist anywhere.

This happens in industry after industry — airplanes, pharma, medicine, construction. No matter how many lives are at stake. When management has the ability to fire employees at will, no trust is possible. Empathy only exists until the first round of layoffs. Then the illusion of trust shatters.

Boeing couldn’t fire their union employees, but the old engineers weren’t protected by a contract. So that’s what management did. That’s how they succeeded in destroying a great American institution, arbitraging Boeing’s former reputation to enrich themselves personally.

Per Kalecki, management will always seek to preserve the ability to fire and will always try to undermine legislative attempts to remove the whip hand. This isn’t something that can be solved by passing a law, it’s a fundamental systemic problem. Capitalism is unsuited to systems that maintain human life. The more complicated our society becomes, the more visible the rot becomes.

Thanks very much for this comment.

Boeing is jacked up enough that their engineers are unionized.

Society of Professional Engineering Employees in Aerospace

http://www.speea.org/

“capitalism is by nature unsuited to these tasks.” – No, but it all depends on definitions and the extent (scope) they apply to. I was the COO of a just enough, just in time steel plant. I don’t know about trust; to some extent that’s what standards are for, and statistical quality control. But, I will say “goodwill” is absolutely necessary. Part of that is doing what you signed up to do. Places where everyone hates everyone else are terrible, and usually the result is ‘awful’.

Businesses can be set up and run in ways that do not undermine empathy. I am part of a process of starting a fully ethical company right now. We have encoded our ethics into our DNA, setting up as a social purpose corporation, where we place social, environmental and community impacts level with profits.

It’s making it easy to choose the best way of working, from the beginning. (Deciding to be a net producer of energy, for example, not just an energy consumer. Vetting our supply chain for fair and safe social and environmental practices, not just price).

Did you read the sequel?

Lila: An Inquiry into Morals

Great book. To me his best. I use it as a text book.

A tour de force, a precis of the festering rot in American capitalism and business culture. Boeing is one data point in a very large set, not a unique case. Thank you for this posting.

An excellent and informative read, thank you for posting.

And thank you to the author for writing this up!

Be afraid people, be very afraid. My son-in-law, a neurosurgeon, was recruited to fill a vacancy created by another surgeon leaving for a more lucrative position. Well, it didn’t work out so well for him, so he approached the hospital that employed him previously (where my son-in-law occupies his seat) with the enticement to the hospital administrators that he would be coming back with his patient database. Well, guess what? They rehired him. Why? For the money he could bring in. No other reason. The author is more than right to talk about empathy in the specific sense in which he uses the word. My son-in-law would say that in his hospital there is none. Just ‘teams’. I was so encouraged to see the author use this word ‘teams’ in a derogatory sense because it is driving me to distraction that almost every interaction with a corporation is with a ‘team’, i.e. nobody, no personality, no personal responsibility. It untethers us all from one another. And that’s quite deliberate on the part of the corporation. I have a mantra about this: ‘They want your money, but they don’t want you’.

That’s how big law firms work. Recruit amoral jerks with a “big book of business.” “You eat what you kill.” Not a great model for, e.g., surgeons…

Thanks JTM. I always enjoy your astute comments.

Agreed. Well put. Be hard for me to have the amoral or in my thinking immoral jerks in the family.

Isn’t this the same “expert” who acquired 2000 hours flying Cessnas? This software guy spends many many paragraphs explaining that the Max software was badly designed. No kidding, and also not news and already thoroughly discussed. While he is undoubtedly correct that machines are superior to people when performing routine tasks–the philosophy pursued by Airbus–it’s also true that the many variables involved in flight mean a human backup is essential and that has traditionally been the philosophy pursued by Boeing and indeed the airline industry in general.

In any case the Max is grounded, its reputation damaged if not destroyed, and it’s hard to see that an article like this one brings much to the table other than to gratify the author’s need to show off.

This is a cheap shot. Our author is a pilot of Cessnas (which have many of the same basic and well-proven instruments, such as artificial horizons, as the largest airliners). All pilots now flying in the cockpits of our airliners got their basic training flying small airplanes like Cessnas. Basic aerodynamic principles like angle of attack are common to all aircraft.

But more importantly, since Boeing’s problems are rooted in their failed software based MCAS system, he is a software engineer!

Yes so basic that even I know about them. Surely the issue now is not the MCAS which will be fixed or simply removed but rather the airplane itself. And I’d suggest that on that we’d be far better served by commentary from pilots or aeronautical engineers.

Sorry, and take no offense, but being a pilot and an engineer, but also a systems theorist, I say as one: neither are particularly trained to look at the entirety of any problem. That would be the program manager or architect. And, most important, someone who writes well. That was an excellent post. NC is wonderfully good at explaining why businesses fail, core competencies here. Boeing is toast as a company. It should be broken up and sold off for scrap. No future in commercial aviation as it is now constituted. The unhinged climate and all. The goal isn’t carbon neutral, it is none at all. At 7.6% reduction per year, starting now. How we doing?

Cessna airplanes are not all small, or single engine, or even flyable with a general aviation pilots license. See: The 303 series Crusader, the 400 series and above, especially the Citation series (corporate jet).

(Aside: Was the “brown pilot” acknowledgement in the post a poke in the ribs at the NC avatar “737 Pilot” ?)

It’s not a cheap shot if it is true.

Many third world countries do not have healthy cockpit cultures and many third world airlines do not invest in training their pilots.

My experience has been to the contrary. Pilots in the third world are very well trained, diligent and looked upon with respect.

Cessna 172 according to this

https://aircraft-data.com/owner/travis-gregory-r

(via DuckDuckGo…happy to be corrected)

It’s their most basic model. I have friends who have flown one–ubiquitous in any small airport.

Agreed that it is a cheap shot. Perhaps I know little and read more slowly, but it seemed to integrate the workings of the airplane with different ideas about automation structure, what software does within that structure and a corporate disregard of effective quality engineering. Perhaps I’m naive, but when I see a well-structured argument that also mirrors and expands upon other objective assessments of Boeing’s failings, I take notice. The attack, a backhanded use of an argument from authority, seems like hot air.

Nobody is defending Boeing here. But I don’t think it’s unreasonable to expect that those who are offering up expertise on airplanes are actually experts on airplanes and especially, in this case, on large jetliners.

Thanks Carolinian! Was wondering how long before you showed your colours on this. And you – of all people – must know this is false: “Nobody is defending Boeing here.”

By that definition, then how does anyone write about anything without first being an ‘expert’? Journalists don’t work that way; they follow a process of their own that yields the truth without being an expert at anything, other than knowing how to make sense of a story, tell the facts and speak the truth.

Different strokes for different folks perhaps, but this critique seems unusually sour grapes for you. As to covering a subject already well covered, that seems to be what all economists and historians and, come to think of it, just about everyone do anyway, at least for a large part of the time. And we often get pleasure and occasionally better understanding out of it.

Agree that this comment is a cheap shot. I worked as a test pilot at Edwards and have a good grasp on this discussion from a hardware and pilot perspective. Software killed people and crashed jets while I was in flight test. Software production is a high skill/high risk area that is very difficult to control. The insight here is that the hardware architecture may not support safe software.

If true, Boeing is doomed.

Completely agree with the “empathy” discussion. Cannot develop new planes without both risks and accountability. However, empathy is the way you navigate through the fear factor that goes with risk.

Software also killed people in Airbus jets–something the author doesn’t mention.

And that “hardware architecture” is really the crux of the issue rather than software which can be easily fixed if properly tested (something Boeing clearly did not do). If the Max is really such a poor hardware design it’s difficult to see how it was able to fly for a year with only two crashes which, by all accounts, were caused by the software. Indeed using the author’s own metric the failure ratio–absent the MCAS–for the Max as hardware would also be zero.

My comment was not a cheap shot but merely a complaint that we don’t need such a long article to tell us something we already know.

Software can’t necessarily be “easily fixed”. It might seem on the face of it easier than fixing hardware, but it often proves not to be the case at all. See also: banking core platforms.

I can’t imagine the amalgamated FCC/Autopilot systems on a 737 are any less complicated on an input/output/scenario basis than a small bank’s transactional needs, and those have been defeating extremely well funded and oversized teams for decades.

I suspect you’re making the same mistake you’re arguing against – experts talking outside their book assume simplicity through lack of understanding. My experience has been that everything that seems straightforward is stupidly complicated, once you learn a little more about it.

Simple in the sense that we know and always have known why those planes crashed–the AOA sensor malfunctioned and the MCAS only used one. Yes you can comb over the airplane and try to find all the other things that are supposedly wrong (best done IMO by an airplane engineer, not blog commentators), but empirically that’s what we do know at this point.

“always have known” or learned after the fact

petard meet hoist

The author’s link to the Nader family’s role in this is also interesting.

Greg,

This is exactly the point. Software is difficult to fix because it’s so easy to make. With a flick of a few keystrokes it is trivial to import millions of lines of code from frameworks, libraries, etc. — most of which the person importing does not understand. That induces Normal Failure in software systems at a rate that would be impossible in hardware systems.

Boeing fixing the EDFCS-730 software most likely will make the software more, not less, prone to failures, more of which will be deadly. There is no free lunch.

“My comment was not a cheap shot but merely a complaint that we don’t need such a long article to tell us something we already know.”

Please don’t presume to speak for me (and other readers of NC).

The Washington Post earlier published a business article: “NASA finds ‘fundamental’ software problems in Boeing’s Starliner spacecraft”.

NASA/Boeing found a second software bug after the launch failed to get the spacecraft to the correct orbit. They had to update the software to get it back to Earth. More problems were found later. NASA admits to inadequate oversight.

You cannot separate the hardware from the software. But two or more different “teams” must work together to make it work. They didn’t. The reason is deregulation. There is no government oversight to force corrections. Also, not seeing any problems allowed corporate managers to pocket more bonus money.

The only way this will be fixed is to use the correct term: “corruption”. And jail the corporate managers who are responsible for killing 346 people. It is manslaughter.

The cheap shots were your personal attacks on the author (questioning expertise, questioning motive).

If the article was too long for you, fine. I thought the empathy angle was interesting.

+1

That commenter could simply choose not to read the Boeing pieces.

+10

I really got a lot out of this article. It was written very lucidly, engaging and illuminating. A rare combination. It tied many disparate threads together and gave an original, insightful viewpoint.

+1

Thanks, Jerri-Lynn!

Me too New Wafer. I appreciated the discussion of the bicameral design of the aircraft’s control systems. If it is truly baked into the 737 design as the author argues, then one shouldn’t fight it. Given that, one can see that the way to fix the MCAS properly must include adding multiple sensors on each side.

What this makes me think of most is coronavirus. In the PRC local officials only move up by hiding problems from their superiors or simply “not having them”; they have, in the author’s terms, no empathy with those under them or even with their peers. As a consequence the most natural reaction to a new virus is to arrest the doctors. Boeing, it seems, is not far off.

Suggest variant of Godwin’s Law has arisen re CCP/coronavirus.

re: “Boeing, ironically, estimates that eighty-percent of all commercial airline accidents are due to so-called “pilot error.” I am sure that if Boeing’s communication department could go back in time, they’d like to revise that to 100%.”

Every pilot worth his/her E6B–I’m a dormant Commercial Pilot–accepts this responsibility implicitly, just like the captain of a ship (who, at one time, was expected to ‘go down with the ship’ whether s/he was at fault or not). Even if the wings fall off in straight-and-level flight, a conscientious pilot will tell you that he/she should have spotted the problem in pre-flight. It just goes with the responsibility of being a pilot.

re: “The Airbus consortium, long a leader in advancing the technological sophistication of aviation (they succeeded with Concorde where Boeing had utterly failed with their SST, for example), realized this. And in the late 1970s embarked on a program to create the first “fly by wire” aircraft, the A320.”

Technically not true:

“The first pure electronic fly-by-wire aircraft with no mechanical or hydraulic backup was the Apollo Lunar Landing Research Vehicle, first flown in 1964.”

Source (among others):

https://www.flyingmag.com/aircraft/jets/fly-by-wire-fact-versus-science-fiction/

(although I suspect the author meant the ‘first commercial airliner’) The F-16–aka ‘The Electric Jet’–which, I believe, is the first FBW fighter aircraft was flying around the time of the A320 (if not before).

And Boeing didn’t ‘fail’ with the SST; between environmentalists and skeptical legislators, the project was canned for lack of funding:

https://en.wikipedia.org/wiki/Boeing_2707

I’m not defending Boeing here, but in the long run even the Concorde proved economically unfeasible.

As I understood it, the Concorde failed because, in a fit of jealous pique, the States wouldn’t allow the Concorde to land anywhere but in NYC and possibly one other airport, I forget which, but the restrictions made the aircraft economically impossible. France (and England) continued to support it for years largely because of national pride, quite justified technically, but outlandishly expensive and impossible to refine over time towards profitability and/or environmental acceptability under the restricted circumstances.

The Concorde stopped flying because of low passenger numbers (the crash in 2000 in Paris had something to do with that), and because it wasn’t efficient flying in the subsonic range, which is what was required over US cities.

There is a difference between why the Concorde failed and why it stopped; the latter being the accumulated outcome of the former. The Concorde’s failure started the minute we outlawed it from flying over US land and that was decades before 2000 and the crash. As to efficiency, that’s a hard issue to solve when you’re restricted to one or two airports in the first place. It’s easy to forget that at that time the US was basically the only country in the world with a large enough market of airports to provide the impetus for development and refinement of the Concorde in particular and the supersonic range for passenger aircraft in general. Tricky Dick was president and wouldn’t tolerate the French and British leapfrogging us in technology simply because we were already beginning to measure things purely in financial terms.

It may be arguable that the Concorde never would have succeeded regardless, but that is moot in that it and whatever offspring it might have spawned never got a chance.

Being supersonic the airplane created sonic booms which restricted it to flight over oceans. It apparently was also quite noisy without the booms which led to protests by people who lived near airports. In other words there were practical objections to SSTs in general and they are also fuel hogs which became more of a factor after the 70s oil crisis.

There was also a high profile crash of a Concorde while taking off in Paris and this was another blow to the plane’s success. At any rate I’m not sure “pique” had much to do with it.

I got the distinct feeling at the time of airspace restrictions on the Concorde of a sentiment, particularly held by Nixon, that if we couldn’t do it with the Boeing 2707, France sure as hell wasn’t going to get a viable shot at it with the Concorde.

I’ll grant that Congress was the impetus behind the restrictions, ironically because of noise and ozone pollution (imagine pollution rather than profit being a factor in our current day Congress), and that was probably what doomed the Concorde, but for many of us not familiar at the time with the issues, it did seem like pique.

As to the crash, huh? Several decades of Concorde flight over the Atlantic occurred before the crash. They stopped manufacturing parts for the Concorde long before the crash and were cannibalizing them from a standby, meaning they had no intention of continuing the program.

I was very young when America’s own “SST” was being suggested and maybe even worked on till a successful environmental movement was able to stop work on this potential sonic boomblaster flying around all over America.

So when Concorde was invented, it wasn’t jealousy which motivated the American public to prevent the Concorde from flying its sonic boomblasting self all over America. It was rage and hate that we would have such a destructive technology foisted on us after we had thought we killed it in its SST guise.

IIRC, the Americans were part of the SST race and the American manufacturers had a consortium working on the design. They decided to attempt a “swing wing” design (where the wings are in a “typical” airplane position during takeoff and then rotate closer to the body line once in flight in order to increase speed).

After a couple of billion dollars (I think–which was real money at the time) and numerous pre-orders, both domestic and international, the designers realized that the plane as conceived could either have wings or accommodate passengers, but that it could not do both. This led the American manufacturers to abandon SST, leaving the Concorde (and its unstable Russian knockoff) as the only option for high speed civil transport.

And yes, the American manufacturers then lobbied hard using (justified, IMO) environmental pretexts to prevent supersonic flight over continental US. Jealousy is probably not the correct emotion driving such lobbying–rather it was the American airline manufacturers’ very strong concern of being effectively locked out of their own domestic market–after all, who would want to spend 10+ hours flying from NY to LA when a supersonic plane could let you do this in under half the time?